Critical IDOR in a University System: A Case Study on Ethical Hacking and Student Data Exposure

A real-world IDOR vulnerability in a university API exposed private student data due to missing authorization and sequential IDs. This write-up explains the discovery, impact, root cause, and defensive lessons.

Disclaimer

This article is shared strictly for educational and defensive security awareness purposes.

All domains, endpoints, identifiers, and institutions are redacted or anonymized.

No real student data was extracted, stored, or misused.

Testing was performed only within an authorized context, following responsible disclosure practices.

Introduction

Security vulnerabilities are often associated with large tech companies or high-profile platforms.

However, educational institutions, despite handling vast amounts of sensitive personal data, are frequently overlooked.

In this case, a security researcher discovered a critical Insecure Direct Object Reference (IDOR) vulnerability in a university system that exposed private student information at scale. The flaw was simple, severe, and entirely preventable.

This write-up documents what was found, why it mattered, how it happened, and what lessons developers and institutions must learn.

What Is an IDOR?

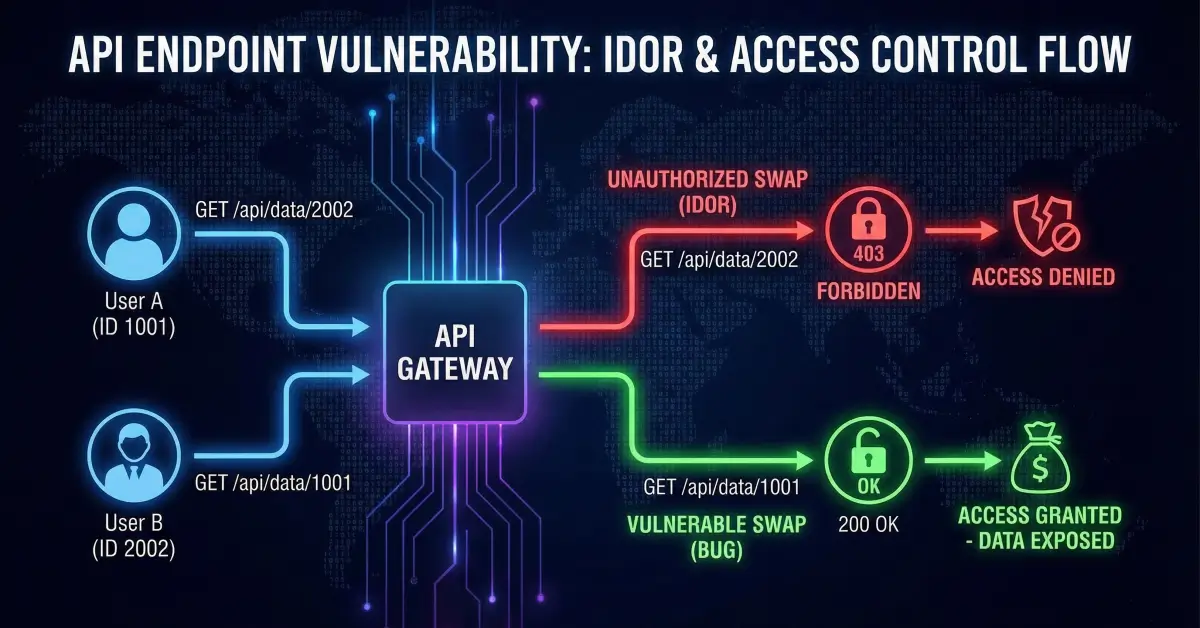

An Insecure Direct Object Reference (IDOR) occurs when an application exposes internal object identifiers (such as user IDs or document IDs) and fails to verify whether the requesting user is authorized to access the referenced object.

In plain terms:

If changing a number or identifier in a request gives access to someone else’s data, that’s an IDOR.

IDOR vulnerabilities are a form of Broken Access Control, which ranks first in the OWASP Top 10 (2021). In the OWASP API Security Top 10, the same class of flaw appears as Broken Object Level Authorization (BOLA), also ranked first.
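To make the definition concrete, here is a minimal sketch contrasting a lookup that trusts the identifier from the request with one that checks ownership first. Everything in it is hypothetical and for illustration only: the `PROFILES` store, the `User` type, the `registrar` role, and both function names are assumptions, not the university's actual code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    user_id: int
    role: str  # hypothetical roles: "student" or "registrar"


# Hypothetical in-memory store standing in for the student database.
PROFILES = {
    1001: {"name": "Student A", "email": "a@example.edu"},
    1002: {"name": "Student B", "email": "b@example.edu"},
}


class Forbidden(Exception):
    """Raised when the caller may not access the requested object."""


def get_profile_vulnerable(requested_id: int) -> dict:
    # IDOR: the identifier taken from the request is trusted as-is.
    return PROFILES[requested_id]


def get_profile_checked(current_user: User, requested_id: int) -> dict:
    # Object-level authorization: the caller must own the record or hold
    # a role that is explicitly allowed to read other students' records.
    is_owner = current_user.user_id == requested_id
    if not is_owner and current_user.role != "registrar":
        raise Forbidden(f"user {current_user.user_id} may not read {requested_id}")
    return PROFILES[requested_id]
```

In the checked version the ownership test runs before the lookup, so a student probing arbitrary IDs gets the same refusal whether or not the record exists.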

Discovery: From Normal Testing to a Serious Finding

The researcher began by performing routine endpoint testing and fuzzing, analyzing how the university platform handled different API requests. Most endpoints behaved correctly, enforcing authentication or returning access-denied responses.

One endpoint, however, behaved differently.

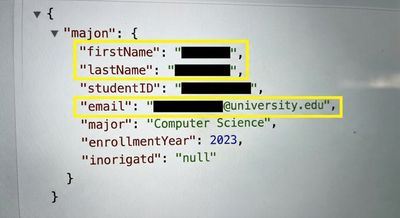

Unlike other pages that returned styled HTML, this endpoint returned raw JSON data containing a student profile. When accessed with the researcher’s own student ID, it displayed personal information as expected.

Curiosity led to a single test.

The numeric ID in the URL was incremented.

The response returned another student’s complete profile.

No authentication error.

No authorization failure.

No warning.

Just data.

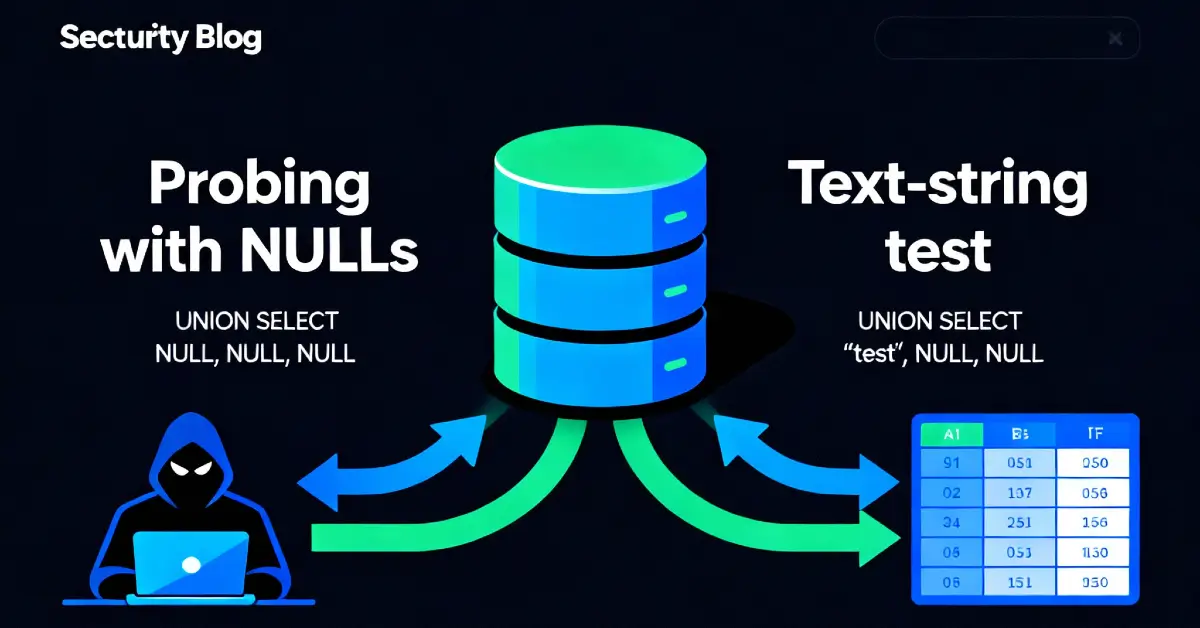

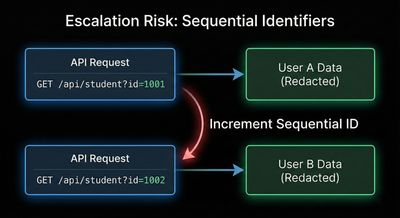

The Escalation Risk: Sequential Identifiers

The severity of the issue increased dramatically once a pattern became clear:

- Student IDs were sequential

- No authentication checks were enforced

- No authorization logic validated ownership

This meant the entire student database was theoretically enumerable.

A simple loop that increments the ID on each request could retrieve thousands of student records.

Although such a script was never executed beyond proof of concept, its simplicity highlights how trivial exploitation would be for a malicious actor.
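To illustrate why sequential identifiers matter without touching any real system, the following sketch simulates guessing entirely in memory. It compares how often a sweep hits an existing record when IDs are sequential versus when they are random UUIDs. The ID range, the record count, and the `hit_rate` helper are assumptions chosen for the example.

```python
import uuid
from typing import Hashable, Iterable, Set


def hit_rate(existing_ids: Set[Hashable], guesses: Iterable[Hashable]) -> float:
    """Return the fraction of guesses that match an existing identifier."""
    guesses = list(guesses)
    hits = sum(1 for guess in guesses if guess in existing_ids)
    return hits / len(guesses)


# Sequential IDs: knowing one valid ID reveals its neighbours.
sequential_ids = set(range(20000, 25000))
sequential_rate = hit_rate(sequential_ids, range(20000, 25000))

# Random version 4 UUIDs: a blind guess has a negligible chance of matching.
random_ids = {uuid.uuid4() for _ in range(5000)}
random_rate = hit_rate(random_ids, (uuid.uuid4() for _ in range(5000)))

print(f"sequential hit rate: {sequential_rate:.2f}")
print(f"random UUID hit rate: {random_rate:.2f}")
```

Unpredictable identifiers only make guessing impractical. If an identifier leaks through a link, a log, or another API response, the record is still exposed unless the server also checks authorization.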

Data at Risk

The exposed JSON responses contained highly sensitive information, including:

- Full names

- University email addresses

- Contact information

- Course enrollments

- Academic records

- Student identification numbers

- Historical academic data

This is not just personal data - it is regulated, protected information.

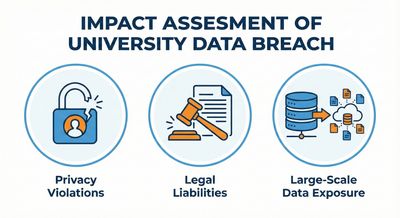

Impact Assessment

This vulnerability represented a catastrophic security failure.

Technical Impact

- Complete lack of access control

- Unauthenticated API data exposure

- Trivial mass enumeration

Privacy Impact

- Exposure of personally identifiable information (PII)

- Violation of student confidentiality

- Potential identity theft risks

Legal & Compliance Impact

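- Potential GDPR violations where the regulation applies (for example, the integrity and confidentiality principle in Article 5 and security of processing in Article 32)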

- Breach of educational data protection laws

- Institutional liability

Reputational Impact

- Loss of trust from students and faculty

- Long-term damage to institutional credibility

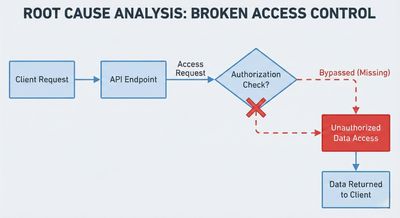

Root Cause Analysis

The vulnerability existed due to a combination of fundamental security failures:

- No Authentication Enforcement: The API endpoint was accessible without verifying user identity.

- No Authorization Checks: The backend did not verify whether the requester was allowed to access the specified student record.

- Sequential Object Identifiers: Predictable IDs made enumeration trivial.

- Lack of Rate Limiting: No safeguards existed to detect or block automated access.

- Environment Misconfiguration: Development-style endpoints were exposed in a production environment.

This was not a single mistake; it was a chain of missing controls.
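One way to picture that chain is as a sequence of gates a request has to pass, each mapped to an HTTP status code. The sketch below is a simplified illustration under stated assumptions: the `SESSIONS` and `RECORDS` dictionaries, the token strings, the `SlidingWindowLimiter` class, and the `handle_get_student` function are all hypothetical, and a real service would rely on its framework's authentication and rate-limiting components.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict, Optional, Tuple

# Hypothetical session store: token -> authenticated student ID.
SESSIONS: Dict[str, int] = {"token-a": 1001, "token-b": 1002}
# Hypothetical record store.
RECORDS: Dict[int, dict] = {1001: {"name": "Student A"}, 1002: {"name": "Student B"}}


class SlidingWindowLimiter:
    """Allow at most max_requests per key within window_seconds."""

    def __init__(self, max_requests: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, key: str) -> bool:
        now = self.clock()
        hits = self._hits[key]
        # Drop timestamps that have left the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


def handle_get_student(token: Optional[str], student_id: int,
                       limiter: SlidingWindowLimiter) -> Tuple[int, Optional[dict]]:
    # 1. Authentication: who is calling?
    caller = SESSIONS.get(token) if token else None
    if caller is None:
        return 401, None
    # 2. Rate limiting: denied lookups also count against the caller.
    if not limiter.allow(f"user:{caller}"):
        return 429, None
    # 3. Authorization: may this caller read this specific record?
    if caller != student_id:
        return 403, None
    return 200, RECORDS[student_id]
```

In this sketch only authenticated callers are rate limited; a production deployment would usually also throttle unauthenticated traffic, for example per source address.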

Responsible Disclosure and Ethical Conduct

The researcher followed ethical security practices throughout the process:

- Testing stopped immediately after confirming the vulnerability

- No large-scale data extraction was performed

- No third-party data was stored or shared

- A detailed responsible disclosure report was prepared

- Remediation guidance was included for the institution

This approach ensured student safety while still enabling the institution to fix a critical flaw.

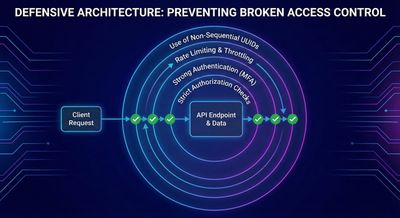

How This Should Have Been Prevented

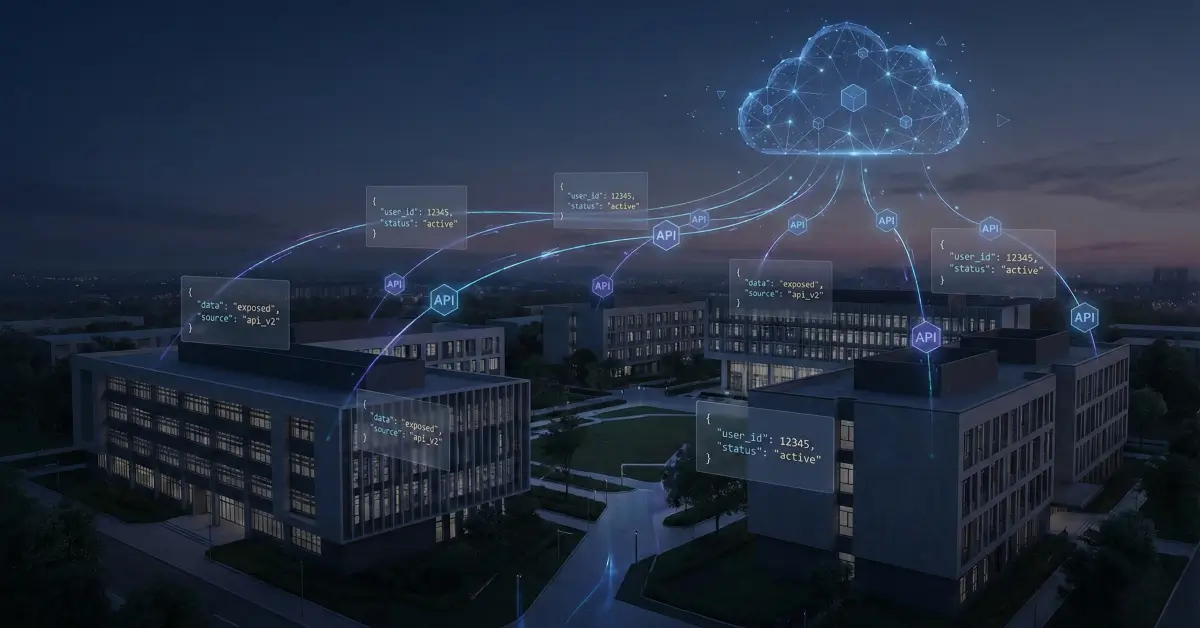

Educational platforms must treat APIs as first-class attack surfaces.

Required Fixes

- Enforce strong authentication on all API endpoints

- Implement object-level authorization checks

- Replace sequential IDs with random UUIDs or other opaque identifiers (as defense in depth, not as a substitute for authorization checks)

- Apply rate limiting and anomaly detection

- Regularly audit APIs in both development and production

- Conduct periodic penetration testing and security reviews

Access control must be enforced server-side, every time, without exception.
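A lightweight way to check "every time, without exception" during an API audit is to ask each endpoint the same question for every pair of distinct users. The sketch below models endpoints as plain functions that return an HTTP status. The handler names, the two test user IDs, and the `find_cross_user_leaks` helper are hypothetical; a real audit would send authenticated requests to a test environment instead.

```python
from typing import Callable, Dict, List, Tuple

# A handler takes (caller_id, target_id) and returns an HTTP status code.
Handler = Callable[[int, int], int]


def profile_handler(caller_id: int, target_id: int) -> int:
    # Enforces ownership before returning data.
    return 200 if caller_id == target_id else 403


def legacy_export_handler(caller_id: int, target_id: int) -> int:
    # Deliberately broken for the example: ignores who is calling.
    return 200


def find_cross_user_leaks(handlers: Dict[str, Handler],
                          user_ids: List[int]) -> List[Tuple[str, int, int]]:
    """Return (endpoint, caller, target) triples where a caller read another user's data."""
    leaks = []
    for name, handler in handlers.items():
        for caller in user_ids:
            for target in user_ids:
                if caller != target and handler(caller, target) == 200:
                    leaks.append((name, caller, target))
    return leaks


leaks = find_cross_user_leaks(
    {"profile": profile_handler, "legacy_export": legacy_export_handler},
    [1001, 1002],
)
for endpoint, caller, target in leaks:
    print(f"{endpoint}: user {caller} could read user {target}")
```

Running a check like this in continuous integration turns "what happens if this ID isn't mine?" into a regression test rather than a one-off discovery.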

Why This Case Matters

Universities store some of the most sensitive personal data imaginable - identities, academic histories, and future career paths.

Yet many educational systems lag behind in security maturity.

This case demonstrates that:

- Severe vulnerabilities don’t require advanced exploits

- Simple logic flaws can lead to massive breaches

- Ethical hackers play a critical role in protecting users

- Responsible disclosure saves institutions from real harm

Key Takeaways

- IDOR vulnerabilities are still widespread

- APIs require the same security rigor as web apps

- Sequential IDs are a known and dangerous anti-pattern

- Authentication alone is not enough - authorization matters

- Ethical hacking protects real people, not just systems

Final Thoughts

This vulnerability did not require zero-days, race conditions, or advanced exploitation techniques. It required one question:

“What happens if this ID isn’t mine?”

Because that question was asked responsibly, a serious breach was prevented.

For developers, the lesson is clear:

Never trust client-controlled identifiers. Always verify access.

For security researchers:

Methodical testing, careful observation, and ethical conduct continue to make the digital world safer - one bug at a time.

References

- OWASP Top 10 – Broken Access Control

- OWASP API Security Top 10 – Broken Object Level Authorization (BOLA / IDOR)

- GDPR Articles 5 & 32 – Data Protection

- NIST SP 800-53 – Access Control

- PortSwigger Web Security Academy – IDOR