Modern Recon: How AI Amplifies Vulnerability Hunting

A practical guide to combining classical reconnaissance tooling with LLMs to accelerate triage, generate payloads, and prioritize targets, responsibly and effectively.

Disclaimer

This article provides defensive, educational guidance for authorized reconnaissance and vulnerability research. All examples are intended for use within legal scope: bug bounty programs, authorized penetration tests, or private laboratories. Do not run intrusive or destructive scans against systems without explicit permission.

Introduction

Reconnaissance has evolved. Classic tools such as Amass, Subfinder, httpx, and Nuclei remain essential for asset discovery and vulnerability identification. Recent advances in large language models (LLMs) add a layer of automation on top: summarizing noisy outputs, generating focused payloads, tailoring wordlists and triage plans, and drafting reproducible reports. LLMs reduce repetitive effort and surface high-value leads, but human validation and ethical guardrails remain mandatory.

This guide presents a practical, tool-agnostic pipeline that combines traditional recon tooling with AI-assisted triage. It includes example scripts, safe prompt templates, and operational patterns for integrating LLMs into a recon workflow, with explicit caveats about hallucinations, false positives, and legal boundaries.

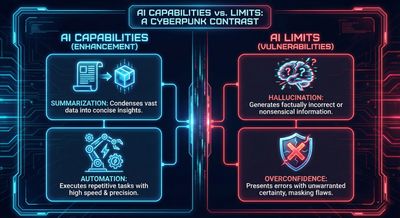

The modern recon thesis - capabilities and limits

Capabilities that AI brings to recon

- Summarization: convert thousands of lines of raw output into concise, prioritized assets.

- Payload generation: produce creative but safe test strings tailored to specific behaviors.

- Automation templates: produce wordlists, nuclei templates, or test orchestration scripts on demand.

- Report drafts: produce structured bug reports and remediation recommendations to accelerate triage.

Limits and pitfalls

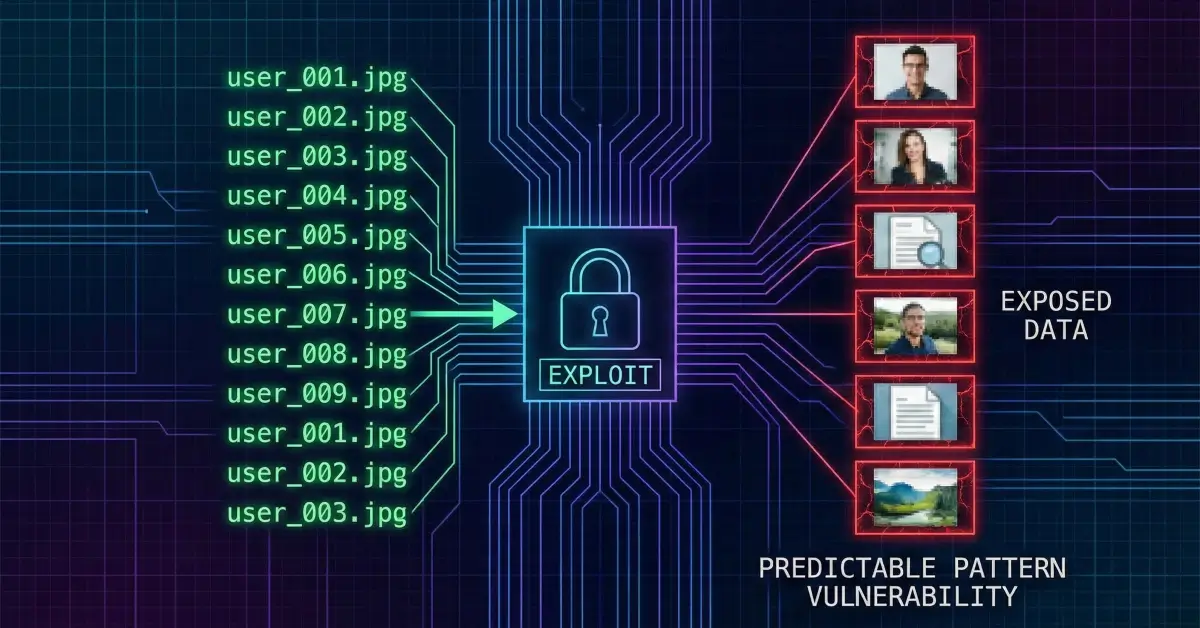

- Hallucinations: LLMs can invent endpoints or claims; every claim requires verification.

- Overconfidence: AI may present low-confidence inferences as facts; always check them against the raw data.

- Ethics & legality: automation does not change authority requirements; explicit permission remains essential.

Conclusion: LLMs amplify human analysts; they do not replace judgment.

High-level pipeline (human + AI)

The pipeline described below follows a human-in-the-loop approach. Each stage lists recommended tools and the role of the LLM.

1. Passive discovery (data collection)

Purpose: build a broad asset inventory.

Typical sources and tools:

- Certificate transparency: crt.sh queries.

- DNS and subdomain sources: Amass (passive), Subfinder, Assetfinder.

- Historical sources: Wayback Machine, Common Crawl.

- Public datasets: Shodan, Censys.

LLM role: normalize and deduplicate raw lists, classify hosts by apparent service type.
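
Much of the normalization and deduplication can be done deterministically before any LLM sees the data, which keeps prompts small. The following is a minimal sketch, assuming the raw input is one hostname per line; the function name `normalize_hosts` and the sample hostnames are illustrative only.

```python
def normalize_hosts(raw_lines):
    """Lowercase, strip wildcard prefixes and trailing dots, and deduplicate."""
    seen = set()
    for line in raw_lines:
        host = line.strip().lower().rstrip(".")
        if host.startswith("*."):
            host = host[2:]  # certificate names may be wildcard entries
        if not host or " " in host:
            continue  # skip blanks and lines that are clearly not hostnames
        seen.add(host)
    return sorted(seen)


raw = ["API.example.com", "*.example.com", "api.example.com.", "", "example.com"]
print(normalize_hosts(raw))
```

Classifying hosts by apparent service type is the part that benefits from the LLM; the cleaned list is what would be passed to it.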

2. Active enumeration (validation)

Purpose: verify which hosts are alive and collect HTTP metadata.

Typical tools: massdns, masscan, httpx, nmap, and ZAP or Burp for targeted checks.

LLM role: parse httpx and nmap outputs to highlight unusual headers (e.g., CORS: *), sensitive endpoints, or admin paths.
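
Simple checks such as wildcard CORS or admin-like paths can also be flagged with a few lines of code, leaving the LLM to interpret the less obvious cases. The sketch below assumes the probe results have already been parsed into dictionaries with `url` and `headers` keys; that record shape and the `ADMIN_HINTS` list are assumptions for illustration, not the native output format of httpx or nmap.

```python
from urllib.parse import urlparse

ADMIN_HINTS = ("/admin", "/manage", "/console")  # illustrative, not exhaustive


def flag_records(records):
    """Return (url, reason) pairs for wildcard CORS and admin-like paths."""
    findings = []
    for rec in records:
        # Header names are case-insensitive, so compare in lowercase.
        headers = {k.lower(): v for k, v in rec.get("headers", {}).items()}
        if headers.get("access-control-allow-origin", "").strip() == "*":
            findings.append((rec["url"], "wildcard CORS origin"))
        path = urlparse(rec["url"]).path.lower()
        if any(path.startswith(hint) for hint in ADMIN_HINTS):
            findings.append((rec["url"], "admin-like path"))
    return findings


sample = [
    {"url": "https://api.example.com/v1", "headers": {"Access-Control-Allow-Origin": "*"}},
    {"url": "https://example.com/admin/login", "headers": {}},
    {"url": "https://example.com/", "headers": {"Server": "nginx"}},
]
for url, reason in flag_records(sample):
    print(f"{url}: {reason}")
```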

3. Content & fingerprinting

Purpose: determine frameworks, API patterns, and likely parameter names.

Typical tools: httpx fingerprinting, ffuf/dirsearch, Wappalyzer-like probes.

LLM role: propose focused wordlists for directory fuzzing derived from page content and project names.
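
A rough, non-LLM baseline for the same idea is to pull frequent words out of the visible page text and use them as fuzzing candidates. This is only a sketch: the tag stripping is deliberately crude, and the `min_len` and `top` parameters are arbitrary assumptions.

```python
import re
from collections import Counter


def candidate_words(html, min_len=4, top=20):
    """Extract frequent words from visible page text as fuzzing candidates."""
    text = re.sub(r"<[^>]+>", " ", html)  # crude tag stripping, not a real parser
    words = re.findall(r"[a-z][a-z0-9_-]+", text.lower())
    counts = Counter(w for w in words if len(w) >= min_len)
    return [word for word, _ in counts.most_common(top)]


page = "<h1>Acme Billing Portal</h1><a href='/invoices'>Invoices</a> <p>billing reports</p>"
print(candidate_words(page))
```

An LLM can then extend such a seed list with variants (plurals, versioned paths, project-specific names) that simple frequency counting would miss.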

4. Vulnerability scanning (templated)

Purpose: run templated checks for common issues.

Typical tools: Nuclei, custom scripts, open-source scanners.

LLM role: adapt templates or suggest follow-up verification steps for hits.

5. AI-assisted triage

Purpose: condense raw outputs into prioritized findings.

Process: concatenate the raw outputs into a single text blob and pass it to an LLM with a prompt that requests a JSON-formatted list of prioritized assets, each with verification steps.

LLM role: produce a short ranked list and verification commands to execute manually.

6. Manual verification & chaining

Purpose: human testers validate and attempt exploitation paths.

LLM role: propose plausible exploit chains, payload variants, and safe PoC strings, all requiring manual validation.

7. Report generation & automation

Purpose: generate initial report drafts and automate follow-up tasks (e.g., creating issues in a tracking system).

Tools: small automation scripts (Python/GitHub Actions) to create tickets for high-priority assets.

LLM role: produce report drafts to be reviewed and edited by the human analyst.

Core tooling cheat sheet (quick reference)

| Category | Tool | Purpose |
|---|---|---|
| Subdomain discovery | Amass, Subfinder, Assetfinder | Passive and active domain enumeration |
| DNS/port ops | massdns, masscan, nmap | DNS resolution, mass port scanning |
| HTTP probing | httpx | Live host verification and header collection |
| Fuzzing | ffuf, dirsearch | Directory/file discovery |
| Templated scanning | Nuclei | Fast vulnerability checks with templates |
| Interactive testing | Burp, ZAP | Manual testing and exploitation |
| Data sources | crt.sh, Shodan, Censys | Certificates and device discovery |
| LLMs | OpenAI, local LLMs | Summarization, payload generation, report drafts |

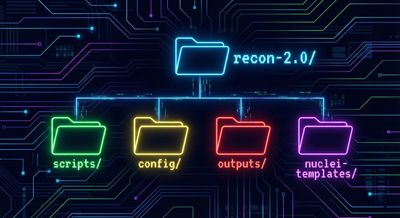

Example repo layout (recommended)

A small, maintainable repository helps standardize runs and reuse prompts.

Treat config/targets.txt as the single source of truth and parameterize all scripts to avoid accidental scans outside scope.
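
One way to enforce that rule is a scope check that every script calls before touching a host. The sketch below assumes `config/targets.txt` holds one root domain per line with optional `#` comments, and that subdomains of a listed root are in scope. Both are assumptions, since real program scopes are often narrower.

```python
def load_scope(lines):
    """Read root domains from targets.txt-style lines, skipping comments and blanks."""
    scope = set()
    for line in lines:
        entry = line.strip().lower()
        if entry and not entry.startswith("#"):
            scope.add(entry)
    return scope


def in_scope(host, scope):
    """True if host is a listed root or a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == root or host.endswith("." + root) for root in scope)


scope = load_scope(["example.com", "# staging is excluded for now", ""])
print(in_scope("api.example.com", scope))
print(in_scope("evilexample.com", scope))
```

Matching on `"." + root` rather than a bare suffix avoids treating look-alike domains such as `evilexample.com` as in scope.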

Practical scripts (templates)

run-recon.sh (orchestration)

The following shell script orchestrates discovery and templated scanning. Replace tool paths and options according to environment.
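
As a rough illustration of that orchestration, the sketch below uses Python rather than shell and only builds the command lines without executing them. The output file names are hypothetical, and the flags shown (`subfinder -dL/-silent/-o`, `httpx -l/-silent/-o`, `nuclei -l/-o`) should be checked against the installed tool versions before use.

```python
import shlex


def build_commands(targets_file, out_dir):
    """Return the discovery -> probing -> templated-scan commands as argument lists."""
    subs = f"{out_dir}/subdomains.txt"  # hypothetical output file names
    live = f"{out_dir}/live.txt"
    return [
        ["subfinder", "-dL", targets_file, "-silent", "-o", subs],
        ["httpx", "-l", subs, "-silent", "-o", live],
        ["nuclei", "-l", live, "-o", f"{out_dir}/nuclei.txt"],
    ]


# Dry run: print the commands so they can be reviewed against scope first.
for cmd in build_commands("config/targets.txt", "out"):
    print(shlex.join(cmd))
```

Once reviewed, each list could be passed to `subprocess.run(cmd, check=True)`; keeping the dry run as the default makes an accidental out-of-scope scan less likely.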

triage-with-llm.py (conceptual)

This is a conceptual example to demonstrate building a prompt and calling an LLM. Do not store API keys in plaintext.

Replace call_llm with a secure client for the selected LLM provider.
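
A minimal sketch of what such a script might look like follows. The environment variable name `LLM_API_KEY`, the `max_chars` limit, and the prompt wording are assumptions, and `call_llm` is left as a placeholder because the client depends on the chosen provider.

```python
import os

PROMPT_HEADER = (
    "You are a senior web security analyst. Use only the data provided below.\n"
    "Output a JSON array with fields: asset, risk, reasons[], verification.\n"
)


def build_prompt(raw_outputs, max_chars=12000):
    """Join raw tool outputs into one blob, truncated to keep the prompt bounded."""
    blob = "\n".join(raw_outputs)[:max_chars]
    return f"{PROMPT_HEADER}RAW:\n{blob}"


def call_llm(prompt):
    """Placeholder: read the key from the environment, never from source code."""
    api_key = os.environ.get("LLM_API_KEY")  # hypothetical variable name
    if not api_key:
        raise RuntimeError("LLM_API_KEY is not set")
    raise NotImplementedError("wire in the selected provider's client here")


print(build_prompt(["https://api.example.com [200]", "https://example.com [301]"]))
```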

LLM prompt templates (copy-paste ready)

Provide the LLM with clear instructions, output constraints, and verification steps.

Prompt - Summarize & Prioritize

Use this to convert many lines of httpx and nuclei output into a JSON shortlist.

You are a senior web security analyst. Given the following raw reconnaissance output, do the following:

- Extract unique assets (subdomains, URLs, open ports).

- For each asset, provide a risk rating: High, Medium, Low, and 1–2 reasons.

- For High and Medium assets, provide a one-line verification command (curl or httpx).

- Output JSON array with fields: asset, risk, reasons[], verification.

RAW: [paste raw httpx/nuclei outputs here]

Prompt - Generate XSS payloads (safe)

You are an experienced penetration tester. Generate 6 safe, non-destructive test payloads for reflected XSS for URL: https://example.com/search?q=. Explain briefly why each may trigger a reflection. Avoid using payloads that exfiltrate live cookies or perform destructive actions.

Prompt - Draft vulnerability report

You are a vulnerability reporter. Using the validated finding below, write a concise bug bounty report with: summary, impact, steps to reproduce (numbered, with curl when possible), PoC notes, suggested remediation (2–3 items). Keep language professional and actionable.

Example AI triage output (JSON)

When used correctly, the LLM should produce structured output similar to the example below. The analyst must verify each claim.
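
Because the model's output is not guaranteed to follow the requested schema, it helps to validate it before anything downstream consumes it. The sketch below checks the fields named in the prompt above; the sample JSON is invented for illustration and is not output from a real run.

```python
import json

REQUIRED = {"asset", "risk", "reasons"}
RISKS = {"High", "Medium", "Low"}


def parse_triage(text):
    """Parse LLM output and reject anything that does not match the schema."""
    items = json.loads(text)
    if not isinstance(items, list):
        raise ValueError("expected a JSON array")
    for item in items:
        missing = REQUIRED - set(item)
        if missing:
            raise ValueError(f"missing fields: {sorted(missing)}")
        if item["risk"] not in RISKS:
            raise ValueError(f"unexpected risk rating: {item['risk']}")
        # The prompt only asks for verification commands on High and Medium items.
        if item["risk"] != "Low" and not item.get("verification"):
            raise ValueError(f"no verification command for {item['asset']}")
    return items


sample = """[
  {"asset": "api.example.com", "risk": "High",
   "reasons": ["wildcard CORS header"],
   "verification": "curl -sI https://api.example.com/"},
  {"asset": "www.example.com", "risk": "Low", "reasons": ["static content only"]}
]"""
print(len(parse_triage(sample)), "items passed validation")
```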

Practical prompt engineering tips

- Bound the model: require JSON output to facilitate parsing.

- Be explicit: ask for verification commands (curl/httpx) rather than general advice.

- Chain prompts: first ask to extract assets, then to prioritize.

- Ask for exact justification: request the snippet of raw output the model used to justify each claim to reduce hallucinations.

- Limit hallucination: instruct the model to only use provided data.
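
The "exact justification" tip lends itself to an automated check: if the model is asked to quote the raw snippet behind each claim, any quote that does not appear verbatim in the input is suspect. The sketch below assumes each item carries a hypothetical `evidence` field holding that quote; the raw line shown is made up for illustration.

```python
def unsupported_items(items, raw_output):
    """Return assets whose quoted evidence is missing from the raw output."""
    flagged = []
    for item in items:
        evidence = item.get("evidence", "").strip()
        if not evidence or evidence not in raw_output:
            flagged.append(item["asset"])
    return flagged


raw = "https://api.example.com [200] [access-control-allow-origin: *]\n"
items = [
    {"asset": "api.example.com", "evidence": "access-control-allow-origin: *"},
    {"asset": "vpn.example.com", "evidence": "OpenVPN login page"},
]
print(unsupported_items(items, raw))
```

A verbatim match does not prove the claim is correct, but a failed match is a cheap signal that the item was likely invented.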

Automation patterns & CI ideas

- Nightly passive scans: schedule Amass/Subfinder jobs and store outputs in S3 or a repo.

- LLM triage job: run a triage job that consumes new outputs, produces JSON, and opens issues for High items.

- Human-in-the-loop gating: require manual approval before converting High findings into tickets.

- Rate-limit caution: schedule network-intensive jobs during allowed windows and follow program rules.

A sample GitHub Actions workflow might:

- Pull targets.txt.

- Run quick passive recon Docker containers.

- Upload results to an artifact store.

- Trigger a triage action that calls an LLM to produce JSON.

- Create draft issues for top items; assign for manual validation.
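
The ticketing step, combined with the human-in-the-loop gate described earlier, might look like the sketch below. It only builds issue payloads as dictionaries; the field names (`title`, `body`, `labels`) and the `approved_assets` set are assumptions, and submitting the payloads to a tracker is left out.

```python
def draft_issues(items, approved_assets):
    """Build draft issue payloads for High items that a human has approved."""
    drafts = []
    for item in items:
        if item["risk"] != "High" or item["asset"] not in approved_assets:
            continue  # unapproved or lower-risk items never become tickets
        drafts.append({
            "title": f"[recon] {item['asset']} needs manual validation",
            "body": "Reasons:\n" + "\n".join(f"- {r}" for r in item["reasons"]),
            "labels": ["draft", "recon"],
        })
    return drafts


triage = [
    {"asset": "admin.example.com", "risk": "High", "reasons": ["admin panel exposed"]},
    {"asset": "api.example.com", "risk": "High", "reasons": ["wildcard CORS"]},
    {"asset": "www.example.com", "risk": "Low", "reasons": ["static site"]},
]
for draft in draft_issues(triage, approved_assets={"admin.example.com"}):
    print(draft["title"])
```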

Ethics, legal boundaries, and safe operations

- Always obtain written permission: bug-bounty scope, signed authorization, or explicit consent.

- Avoid destructive tests: do not run destructive payloads, stress tests, or data-exfiltration techniques.

- Protect discovered PII: if sensitive data appears, redact and follow the program’s disclosure process.

- Do not share sensitive outputs: avoid public disclosure until fixed.

- Document everything: timestamps, tool versions, and exact commands used.

LLMs can aid reporting, but human reviewers must confirm accuracy and avoid accidental exposure of secrets or PII.
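
The "document everything" item can be as simple as appending one structured record per command to a log file. The following sketch shows one possible record shape; the field names and the example version string are assumptions.

```python
import json
from datetime import datetime, timezone


def log_entry(command, tool, version):
    """Serialize one audit record: when, which tool and version, exact command."""
    return json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "version": version,
        "command": command,
    })


# The version string here is a placeholder, not a real release number.
print(log_entry("httpx -l out/subdomains.txt -silent", "httpx", "x.y.z"))
```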

Quick reference commands (cheat-sheet)

- Subdomain enumeration:

- HTTP probing:

- Directory fuzzing:

- Nuclei:

Final checklist before reporting a finding

- Reproduce the issue at least twice in controlled conditions.

- Capture minimal, redacted evidence (curl commands, status codes).

- Estimate business impact (data exposure, downtime risk).

- Provide actionable remediation: configuration change, header addition, or code fix.

- If AI helped draft the report, verify all claims and remove any hallucinations.

Conclusion

Modern reconnaissance combines established scanning tools with LLM-assisted triage and templating. This combination increases throughput and focuses human creativity where it matters most: exploitation logic, ethical judgment, and remediation. Used responsibly, AI reduces time-to-find and improves report quality. However, every AI-suggested lead must be validated manually, and all testing must take place under explicit authorization.