Unsafe eval() and DOM XSS: How a Single Line of JavaScript Can Compromise Everything

A deep defensive breakdown of how unsafe eval() usage led to full client-side compromise. Learn how to detect, prevent, and defend against DOM XSS at the code and architecture level.

Disclaimer (Educational Use Only)

This post is for educational and defensive purposes. It explains how unsafe JavaScript practices - specifically eval() - can lead to client-side execution vulnerabilities like DOM XSS.

No live exploitation or unauthorized testing is demonstrated. Always test responsibly in authorized labs or self-owned systems.

🧩 Introduction

In the world of web application security, some vulnerabilities refuse to die. SQL injection may dominate the backend, but DOM-based Cross-Site Scripting (DOM XSS) remains the silent killer on the frontend.

And at the heart of many of these attacks lies a single, deceptively simple function:

eval().

Developers love it for its convenience. Attackers love it even more for its chaos.

This case study - based on a real research finding - explores how one vulnerable line of JavaScript inside a production app turned a harmless feature into a full-scale security disaster.

But more importantly, we’ll dissect how to defend against it using real-world techniques, secure coding standards, and automated detection.

Understanding the Vulnerability: Why eval() Is So Dangerous

The eval() function in JavaScript executes any string as code. That sounds powerful - and it is - but it’s also one of the biggest anti-patterns in modern web development.

Let’s see why.

⚙️ The core issue

When you call eval() on a string, you’re literally asking the browser to interpret that string as code. If that string is influenced by any user-controlled data, it becomes an open door for code injection.

The moment untrusted input enters eval(), the attacker can run arbitrary JavaScript in the page’s origin, with the same access as the site’s own scripts. That is DOM-based XSS.
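To make the point concrete, here is a minimal sketch that uses a harmless stand-in for attacker input. The `record` helper and the `untrustedInput` string are illustrative assumptions, not code from the application discussed in this post.

```javascript
// Hypothetical helper that stands in for any side effect an attacker might want.
const sideEffects = [];
function record(message) {
  sideEffects.push(message);
}

// Imagine this string arrived from a query parameter or an API response.
const untrustedInput = "record('code chosen by whoever controls the string')";

// eval() does not treat the string as data; it parses and runs it as JavaScript.
eval(untrustedInput);

console.log(sideEffects);
```

Nothing in the calling code mentions `record`, yet it runs, because the string decided what to execute.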

🧠 The developer’s mistake

A developer wrote something like eval(userData.action), passing a field from an API response straight into eval().

The intention?

To dynamically execute user actions returned by an API.

The result?

A silent, client-side vulnerability waiting to be abused.

Step 1 – Recon: Finding the Needle in the Minified Haystack

The researcher began with classic reconnaissance of the application’s client-side JavaScript.

Hours of scanning led to a clue - a JS bundle that looked suspiciously dynamic:

client-core.bundle.js.

Opening it in Burp and VS Code revealed something horrifyingly simple: a call to eval() on userData.action.

No sanitization. No validation. Just blind trust in API data.

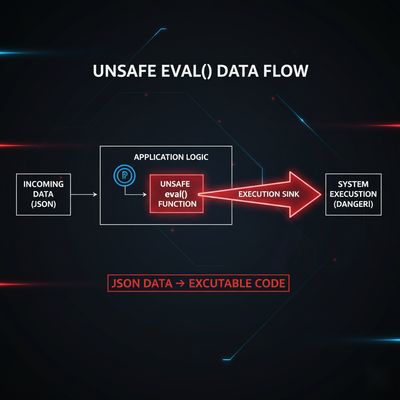

Step 2 – The Sink: Turning Data Into Code

This is what we call a sink - a point in the code where data enters a dangerous function.

Here, eval() is the sink.

userData.action is the source (the API response).

So if the attacker can manipulate userData.action, they can run any arbitrary JavaScript.

Even if the API itself is safe, a man-in-the-middle, a compromised third-party integration, or proxy-level tampering can modify responses, and a missing or permissive CSP will not stop the injected code from running. Suddenly every visiting browser becomes a victim.

Beginner Breakout 🧩

Sink: The function or location where untrusted data gets executed.

Source: The point where user data enters the system (forms, API, query params, etc.).

DOM XSS: A type of cross-site scripting where the malicious payload is executed by client-side code in the browser, often without the server ever processing it.

Step 3 – Confirming the Behavior

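In a safe, local test setup, the researcher simulated the vulnerable behavior with an API response carrying a legitimate action value.

Upon interception, they replaced that value with a harmless proof-of-concept payload.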

Result: the browser executed the payload.

No backend logic. No server logs.

A classic DOM XSS via unsafe eval.
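A local simulation of this behavior might look like the sketch below. It is a hypothetical reconstruction: `handleUserData`, `showDashboard`, and `auditTrail` are invented names, and the "tampered" payload is a benign marker rather than anything from the original research.

```javascript
// Harmless stand-ins for application behavior.
const auditTrail = [];
function showDashboard() {
  auditTrail.push("showDashboard");
  return "dashboard";
}

// Hypothetical reconstruction of the vulnerable pattern: an API field flows into eval().
function handleUserData(userData) {
  // SINK: whatever string is in userData.action runs as code.
  return eval(userData.action);
}

// 1. The response the developer expected.
handleUserData({ action: "showDashboard()" });

// 2. The same response after tampering in an intercepting proxy (benign marker payload).
handleUserData({ action: "auditTrail.push('attacker-controlled code ran')" });

console.log(auditTrail);
```

The handler cannot tell the two responses apart, which is the whole problem: both are just strings handed to the sink.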

Step 4 – Why This Matters: Beyond alert(1)

“alert(1)” may be the meme of XSS proofs, but real attackers don’t stop there.

Unsafe eval() opens doors to:

- 🥷 Session hijacking: Steal cookies, tokens, or credentials from localStorage.

- 📦 Data exfiltration: Extract JWTs or API keys cached in frontend apps.

- 🕵️ Silent persistence: Inject backdoors through dynamic script tags.

- 🌐 Cross-domain exploitation: Execute malicious payloads within the trusted site’s context.

Step 5 – Real-World Exploitation Chain (Conceptual Overview)

In a controlled test, a malicious payload could:

- Fetch sensitive session info

- Access localStorage

- Load remote malicious JS

Now combine that with session cookies and JWTs in localStorage, and attackers can hijack user sessions, impersonate accounts, or even interact with backend APIs directly.

Step 6 – The Defensive Perspective

Instead of focusing on the exploit, let’s see how defenders and developers should detect and fix such patterns before they become public.

🔍 1. Detecting eval() in codebases

Search your source and build pipelines for dangerous patterns such as eval(), new Function(), and string arguments passed to setTimeout() or setInterval().

Automate this with tools like Semgrep, SonarQube, or CodeQL for static analysis.
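As a rough first pass before a real static analyzer is wired in, a line-based scan can be sketched in a few lines. The pattern list and the `findSinks` function are assumptions made for illustration. A regex scan misses aliased or indirect calls, so treat it as a tripwire, not a replacement for Semgrep or CodeQL.

```javascript
// Patterns for common string-to-code sinks. The list is illustrative, not exhaustive.
const SINK_PATTERNS = [
  { name: "eval", regex: /\beval\s*\(/ },
  { name: "new Function", regex: /\bnew\s+Function\s*\(/ },
  { name: "string timer", regex: /\bset(?:Timeout|Interval)\s*\(\s*["'`]/ },
];

// Returns one finding per matching pattern per line: { line, sink, text }.
function findSinks(source) {
  const findings = [];
  source.split("\n").forEach((text, index) => {
    for (const { name, regex } of SINK_PATTERNS) {
      if (regex.test(text)) {
        findings.push({ line: index + 1, sink: name, text: text.trim() });
      }
    }
  });
  return findings;
}

// Small hand-written sample standing in for a real source file.
const sample = [
  "const data = JSON.parse(body);",
  "eval(userData.action);",
  'setTimeout("refresh()", 1000);',
  "setTimeout(refresh, 1000);",
].join("\n");

console.log(findSinks(sample));
```

In a pipeline, a non-empty result from a scan like this could fail the build and point reviewers at the offending lines.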

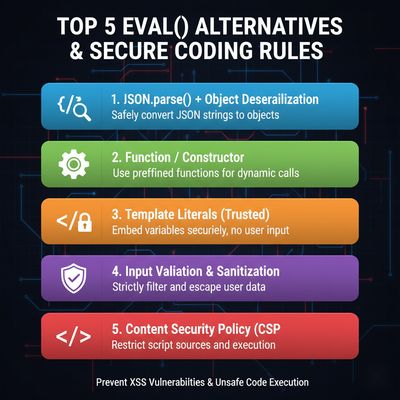

🧩 2. Using safer alternatives

In almost all cases, eval() isn’t needed. Use JSON.parse() for data and explicit function lookups for behavior.
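For the common case where eval() was only ever used to turn a server string into an object, a sketch like the following is usually enough. `parseUserData` is a hypothetical wrapper name; `JSON.parse` is the standard built-in.

```javascript
// Parse a server payload as data only. JSON.parse never executes its input.
function parseUserData(rawBody) {
  try {
    return { ok: true, value: JSON.parse(rawBody) };
  } catch (error) {
    // Anything that is not valid JSON (including script-like text) is rejected.
    return { ok: false, reason: error.message };
  }
}

console.log(parseUserData('{"action":"showDashboard"}'));
console.log(parseUserData("alert(1)"));
```

The second call fails to parse instead of running, which is exactly the behavior you want from untrusted input.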

🧱 3. Implement action whitelisting

Instead of executing arbitrary code, map allowed action names to predefined handler functions and reject everything else.
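One way to sketch this, assuming the API only needs to trigger a small, known set of behaviors: the action names and handlers below are hypothetical placeholders.

```javascript
// Allowlist of action names mapped to handlers. Names and handlers are hypothetical.
const ACTIONS = new Map([
  ["showDashboard", () => "dashboard shown"],
  ["refreshProfile", () => "profile refreshed"],
]);

function runAction(userData) {
  // The API value is only ever used as a lookup key, never as code.
  const handler = ACTIONS.get(userData.action);
  if (typeof handler !== "function") {
    throw new Error(`Unknown action: ${String(userData.action)}`);
  }
  return handler();
}

console.log(runAction({ action: "showDashboard" }));
```

A `Map` is used rather than a plain object so that inherited keys such as `constructor` cannot be resolved as actions.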

🔐 4. Enable strict CSP

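A Content Security Policy can block dynamic script execution altogether. When script-src does not include 'unsafe-eval', the browser refuses eval(), new Function(), and string-based timers.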
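A minimal sketch of building such a policy on the server side follows. The directive values are assumptions for a site that serves all of its scripts from its own origin, and `buildCsp` is a hypothetical helper.

```javascript
// Directive values are illustrative; adjust the sources to your own origins.
const CSP_DIRECTIVES = {
  "default-src": ["'self'"],
  "script-src": ["'self'"], // deliberately no 'unsafe-eval' and no 'unsafe-inline'
  "object-src": ["'none'"],
  "base-uri": ["'self'"],
};

// Join directives into the single string expected by the response header.
function buildCsp(directives) {
  return Object.entries(directives)
    .map(([name, sources]) => `${name} ${sources.join(" ")}`)
    .join("; ");
}

const cspHeaderValue = buildCsp(CSP_DIRECTIVES);
console.log(`Content-Security-Policy: ${cspHeaderValue}`);
```

Rolling a policy like this out in report-only mode first is a common way to find legitimate code that still depends on eval() before enforcement breaks it.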

🧰 5. Add security headers

- X-Content-Type-Options: nosniff

- X-Frame-Options: DENY

- Referrer-Policy: no-referrer

- Permissions-Policy: geolocation=(), camera=()
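These headers can be applied in one place so that no route forgets them. The sketch below assumes a response object with a `setHeader(name, value)` method, as on Node's `http.ServerResponse`; `applySecurityHeaders` is a hypothetical helper name.

```javascript
// Header values taken from the list above.
const SECURITY_HEADERS = {
  "X-Content-Type-Options": "nosniff",
  "X-Frame-Options": "DENY",
  "Referrer-Policy": "no-referrer",
  "Permissions-Policy": "geolocation=(), camera=()",
};

// `res` is anything exposing setHeader(name, value), such as Node's http.ServerResponse.
function applySecurityHeaders(res) {
  for (const [name, value] of Object.entries(SECURITY_HEADERS)) {
    res.setHeader(name, value);
  }
  return res;
}
```

None of these headers stops eval() on its own; they narrow related attack surface such as MIME sniffing and framing.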

Step 7 – Detecting DOM XSS in Production

You can’t fix what you don’t see.

Add runtime instrumentation and reporting:

- CSP violation reports (report-uri)

- Browser extension monitoring

- Burp’s DOM Invader (safe testing only)

- CI/CD checks using dependency scanning
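For the CSP reporting item, a collector mostly needs to pull a few fields out of each report. The sketch below assumes the legacy report-uri JSON shape, where the payload sits under a `csp-report` key. `summarizeCspReport` is a hypothetical helper and the sample report is hand-written for illustration.

```javascript
// Extract the fields worth alerting on from a legacy report-uri payload.
function summarizeCspReport(rawBody) {
  let parsed;
  try {
    parsed = JSON.parse(rawBody);
  } catch {
    return null; // not JSON, ignore
  }
  const report = parsed && parsed["csp-report"];
  if (!report || typeof report !== "object") return null;
  return {
    page: report["document-uri"] ?? "unknown",
    directive: report["violated-directive"] ?? "unknown",
    blocked: report["blocked-uri"] ?? "unknown",
  };
}

// Hand-written sample body, not captured from a real browser.
const sampleReport = JSON.stringify({
  "csp-report": {
    "document-uri": "https://app.example.com/dashboard",
    "violated-directive": "script-src",
    "blocked-uri": "eval",
  },
});

console.log(summarizeCspReport(sampleReport));
```

Report endpoints receive untrusted input too, so the summary should be logged or stored as data and never rendered without escaping.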

Step 8 – Developer Education: The Root of Prevention

DOM XSS isn’t just a technical failure - it’s a developer culture problem.

Most developers don’t use eval() maliciously; they use it for shortcuts, rapid prototyping, or old habits. That’s why training and secure code reviews matter more than any tool.

Create internal guidelines:

- ❌ Never use eval() or new Function()

- ❌ Avoid dynamic script injection

- ✅ Use templating frameworks (React, Vue) that auto-escape values

- ✅ Lint for unsafe DOM APIs

Removing a single unsafe line of code can save an entire company from a breach.

Defensive Perspective (Detailed)

✅ Why this bug existed

The developer trusted API data implicitly. They assumed it would always be safe because only the server provided it.

But security breaks when assumptions do.

APIs change. Third-party integrations get compromised. Network tampering happens. That’s why “trust, but verify” isn’t enough in security - treat every input as untrusted until it has been validated.

✅ How defenders can detect such bugs early

- Use CSP reports to detect script execution anomalies.

- Run static code scans on every commit to block unsafe functions.

- Integrate dependency scanners to catch known vulnerable libraries.

✅ Enterprise mitigation strategy

Large orgs should define frontend security baselines:

- CSP enforced globally

- SAST scanning for JS sinks

- XSS regression tests post-build

- Security code ownership per module

Troubleshooting & Pitfalls (For Security Teams)

❌ “We removed eval, so we’re safe now.”

Not necessarily.

setTimeout("someCode", 1000), setInterval() with a string argument, and new Function() are equally dangerous, because each one compiles a string into code.

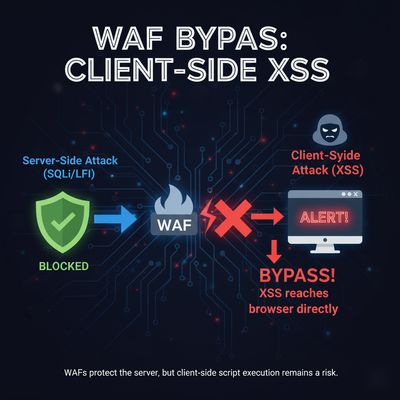
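The sketch below shows why `new Function()` belongs in the same category, alongside the safe form of a timer call. The `refresh` function is a hypothetical placeholder. The string form of `setTimeout` is left out because browsers evaluate it while Node.js rejects it.

```javascript
// new Function compiles a string into a function body, just as eval compiles a string.
const compiled = new Function("return 6 * 7");
const viaFunctionConstructor = compiled();
console.log(viaFunctionConstructor);

// Safer timer usage: pass a function reference, never a string of code.
function refresh() {
  return "refreshed";
}
setTimeout(refresh, 0);
```

If the string handed to `new Function()` comes from untrusted input, the result is the same as with eval().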

❌ “Our WAF will stop XSS.”

Nope.

DOM XSS happens inside the browser, after WAF filtering.

It’s invisible to traditional firewalls.

❌ “The API is internal, not public.”

Still unsafe.

Internal APIs can be intercepted, leaked, or manipulated via other vectors.

✅ “We sandbox third-party scripts.”

Good - but remember that your own code is often the weakest link.

Real-World Examples: Companies Burned by eval()

- 2019 – Shopify third-party app: Used eval() in embedded scripts; resulted in customer data exposure.

- 2021 – Chrome extension ecosystem: Dozens of extensions caught executing eval() with remote payloads.

- 2023 – Financial dashboard vendor: A misconfigured API returned script content inside JSON. Users’ JWT tokens leaked through DOM XSS.

These incidents prove a pattern: unsafe eval is not rare - it’s everywhere.

Step 9 – Preventing Future Eval-like Issues

🧩 Introduce Code Policies

In your .eslintrc, enable the rules that flag eval() and its relatives: no-eval, no-implied-eval, and no-new-func.
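A minimal configuration might look like the sketch below, written in the `.eslintrc.js` form. The three rule names are ESLint core rules. Treating them as errors is an assumption about how strict your team wants to be.

```javascript
// .eslintrc.js - minimal sketch; merge these rules into your existing config.
const config = {
  root: true,
  rules: {
    "no-eval": "error", // direct eval() calls
    "no-implied-eval": "error", // setTimeout/setInterval with string arguments
    "no-new-func": "error", // new Function("...")
  },
};

module.exports = config;
```

With these set to "error", a lint step in CI fails as soon as one of the patterns is reintroduced.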

🧠 Security Champions Program

Educate developers to spot DOM sinks, not just backend vulns.

Train on CSP, React sanitization, and automated XSS detection.

🛡️ Regular Security Audits

Perform quarterly frontend audits - even mature products regress.

🔍 Monitor JS Integrity

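Subresource Integrity (SRI) lets the browser verify that an external script matches a known hash before running it, so a tampered file is refused. The hash goes in the integrity attribute of the script tag.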
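The integrity value is the hash algorithm name followed by the base64 digest of the file. The sketch below computes it with Node's built-in `crypto` module. The helper names, the CDN URL, and the script contents are placeholders.

```javascript
const crypto = require("crypto");

// Compute an SRI value such as "sha384-<base64 digest>" for the given file contents.
function sriHash(content, algorithm = "sha384") {
  const digest = crypto.createHash(algorithm).update(content).digest("base64");
  return `${algorithm}-${digest}`;
}

// Build a script tag that pins the external file to that hash.
function scriptTag(src, content) {
  return `<script src="${src}" integrity="${sriHash(content)}" crossorigin="anonymous"></script>`;
}

// Placeholder URL and contents; in practice, hash the exact file you deploy.
const exampleContent = "console.log('library code');";
console.log(scriptTag("https://cdn.example.com/lib.js", exampleContent));
```

The hash must be regenerated whenever the external file changes, otherwise the browser will block the updated script.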

Final Thoughts

The eval() vulnerability teaches a timeless lesson:

In JavaScript, the smallest mistake has the biggest consequences.

This isn’t about scaring developers. It’s about awareness.

Modern frontend frameworks, CI/CD security gates, and static scanners make avoiding this simple - but only if you care enough to enforce them.

Never underestimate one insecure function.

Because as this case proves, one line of JavaScript can compromise an entire platform.