Meta Spark AR RCE: Package Postinstall Remote Code Execution

Two Spark AR RCEs - a package postinstall RCE via arexport packages and a ZIP path-traversal RCE - analysed with safe PoCs and remediation.

Disclaimer

This post is for defensive, educational, and research purposes only. All testing described was performed by the original researcher in a legal context and responsibly disclosed to Meta / Facebook. Do not attempt these actions against systems you do not own or do not have explicit authorization to test.

Introduction

Spark AR Studio (Meta) historically accepted packaged projects and libraries that could be installed into the editor. Two independent but related real-world findings demonstrate how client tooling and packaging can become a vector for remote code execution (RCE):

- A package postinstall RCE discovered by inspecting installed AR library packages inside a saved `.arexport` project (this report earned the researcher a $2,625 reward).

- A ZIP path-traversal RCE previously discovered in Spark AR that abuses ZIP extraction to write files to arbitrary locations and influence Node/Yarn behavior (paid $2,500).

Both findings are excellent demonstrations of supply-chain and local toolchain risks: when local tooling (Spark AR Studio) extracts or executes content from third-party packages, an attacker who can influence package contents can escalate that to code execution on the developer’s host.

This blog explains both findings, safe reproduction steps (lab only), root causes, and pragmatic mitigations for application developers and platform engineers.

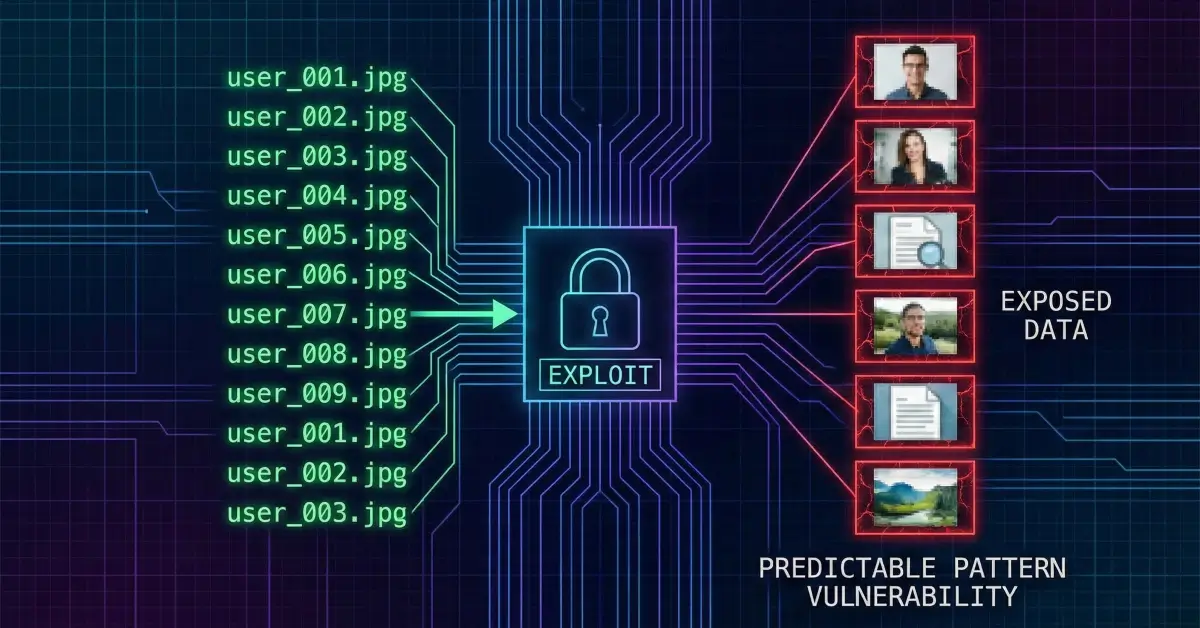

Quick summary of the two findings

- Package postinstall RCE (arexport packages): Installed AR library packages included a nested ZIP (`kitchensink.zip`) containing a `package.json` with lifecycle scripts (`postinstall`/`prepare`). Spark AR’s install flow triggered Node package installation, which executed lifecycle scripts, enabling arbitrary JS execution (the PoC ran `calc.exe` on Windows).

- ZIP path-traversal RCE (earlier report): Spark AR extracted ZIP-based project files to disk without sanitizing embedded paths. Attackers used directory traversal in ZIP entries to write files to known locations (e.g., `%PUBLIC%`), then manipulated Yarn/Node behavior (via `.yarnrc` and `yarnPath`) so Node would execute attacker JS.

Why these are important (threat model)

- These are local code execution vulnerabilities that affect users who open attacker-controlled projects or install malicious library packages.

- They are supply-chain adjacent: attackers who can publish packages (or trick a user into installing a crafted package) can trigger code locally.

- Impact ranges from developer workstation compromise to distribution of malicious AR effects if CI/build systems process these packages.

Threat actors that benefit: malicious library authors, social-engineering attackers who trick authors into opening crafted projects, and insiders who can upload packages to private libraries.

Technical deep dive - Package Postinstall RCE (arexport method)

How the researcher found it

- Installed a package from the Spark AR library into a project.

- Saved the project as a `.arexport` file. (`.arexport` and other Spark AR project files are ZIP-derived bundles.)

- Opened the archive with 7-Zip and inspected contents. A folder `internal` contained `kitchensink.zip`.

- Inside `kitchensink.zip` the researcher found a `package.json` (Node package manifest). Knowing Spark AR invokes Node while loading projects, they asked: if Node runs `npm install` (or similar) inside package folders, will lifecycle scripts run?

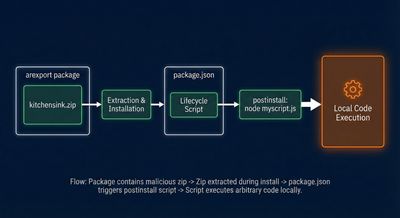

The abuse

package.json supports lifecycle scripts like postinstall and prepare. If a process executes package installation, these scripts run automatically.

Conceptually, the malicious package.json declared postinstall and prepare entries under scripts, each invoking a bundled file, myscript.js.

Because myscript.js ships in the same package, the lifecycle triggers execute it during install. The researcher added a simple myscript.js that launched calc.exe (a safe visual PoC on Windows), demonstrating arbitrary code execution on project open.
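To make the lifecycle-hook mechanism concrete from the defender's side, here is a minimal Python sketch that reads a package.json string and reports the install-time hooks it declares. The sample manifest, the `node myscript.js` command, and the function name `lifecycle_scripts` are illustrative assumptions, not the researcher's actual files.

```python
import json

# Script names that npm/Yarn run automatically around an install.
# The post names postinstall and prepare; preinstall and install are
# the related npm install-time hooks.
LIFECYCLE_HOOKS = ("preinstall", "install", "postinstall", "prepare")


def lifecycle_scripts(manifest_text: str) -> dict:
    """Return the install-time lifecycle scripts declared in a package.json string."""
    manifest = json.loads(manifest_text)
    if not isinstance(manifest, dict):
        return {}
    scripts = manifest.get("scripts", {})
    if not isinstance(scripts, dict):
        return {}
    return {name: cmd for name, cmd in scripts.items() if name in LIFECYCLE_HOOKS}


# Hypothetical manifest for illustration only; not the researcher's file.
SAMPLE_MANIFEST = """
{
  "name": "kitchensink",
  "version": "1.0.0",
  "scripts": {
    "postinstall": "node myscript.js",
    "prepare": "node myscript.js",
    "test": "echo ok"
  }
}
"""

if __name__ == "__main__":
    for hook, command in lifecycle_scripts(SAMPLE_MANIFEST).items():
        print(f"{hook}: {command}")
```

Any non-empty result means that a plain install of this package would run the listed commands on the host.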

Safe reproduction outline (lab only)

- Create a test Spark AR project.

- Add a nested package folder containing `package.json` and `myscript.js`.

- Repack as `.arexport` (ZIP) and open in a controlled VM.

- Observe Node lifecycle script execution (monitor new processes).

The lifecycle command that Node/Yarn ends up executing on the host is whatever the scripts entry names; in the PoC it ran myscript.js, which launched calc.exe.

(Do not run this on a production machine; use an isolated VM.)

Root cause

- The platform unpacks third-party package content and triggers Node package installation (or otherwise executes lifecycle scripts) without restricting or sandboxing script execution.

- `package.json` lifecycle hooks are designed for legitimate packages, but when the host tool executes them implicitly on user machines, they become an arbitrary code-execution vector.

Technical deep dive - ZIP Path Traversal RCE (previous Spark AR finding)

Overview

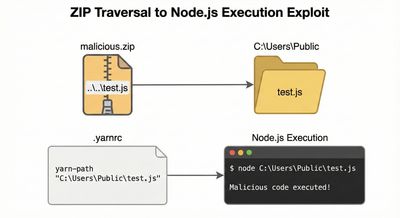

Spark AR project packages (arprojpkg / arexport) are ZIP files extracted to disk on open. A path traversal vulnerability in the extraction routine allowed malicious project archives to write files outside the intended extraction directory.

Attack outline

- Craft a ZIP entry with a filename that includes traversal sequences, e.g., `../../../../Users/Public/test.js`.

- When the project is opened, the extraction routine writes `test.js` to `C:\Users\Public\test.js` (Windows example).

- Write a `.yarnrc` file into a location Node reads (user home folder) with `yarnPath` pointing to the extracted JS.

- Spark AR starts a Node/Yarn process that reads `.yarnrc` and executes the referenced JS, producing RCE.

Example artifacts (conceptual)
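As a lab-only illustration, the Python sketch below builds an inert in-memory ZIP containing one traversal entry and shows where a naive join-and-write extractor would place each file on Windows. The extraction root `C:\work\spark\tmp\proj` and the benign entry name are hypothetical, chosen only so the arithmetic is easy to follow; Spark AR's real extraction directory is not stated in the report summary.

```python
import io
import ntpath
import zipfile

# Hypothetical extraction root (four levels below C:\), for illustration only.
EXTRACT_ROOT = r"C:\work\spark\tmp\proj"


def build_lab_archive() -> bytes:
    """Build an in-memory ZIP with one benign and one traversal entry (inert text only)."""
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr("scene/placeholder.txt", "placeholder")
        zf.writestr("../../../../Users/Public/test.js", "// inert placeholder")
    return buf.getvalue()


def resolved_targets(archive: bytes, root: str) -> dict:
    """Map each entry name to the Windows path a naive extractor would write to."""
    with zipfile.ZipFile(io.BytesIO(archive)) as zf:
        return {
            name: ntpath.normpath(ntpath.join(root, name))
            for name in zf.namelist()
        }


if __name__ == "__main__":
    prefix = EXTRACT_ROOT.lower() + "\\"
    for name, target in resolved_targets(build_lab_archive(), EXTRACT_ROOT).items():
        escaped = not target.lower().startswith(prefix)
        print(f"{name!r} -> {target} (escapes root: {escaped})")
```

Nothing is written to disk here; the sketch only computes paths, which is enough to see why unsanitized `..` segments let an archive choose its own destination.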

Why this worked

- The extractor did not sanitize ZIP entry paths (allowed `..` segments).

- Node/Yarn read `.yarnrc` and implicitly trusted `yarnPath`.

- The attack required no privileged path or admin rights; public folders were used to ensure predictable paths.

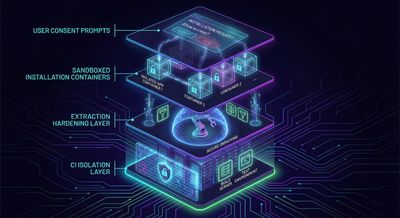

Defensive perspective - platform & developer mitigations

For Spark AR / similar desktop tooling

- Never execute third-party lifecycle scripts automatically. Treat package manifests as data, not code, unless explicitly authorized.

- Sandbox installs: If package installs are required, run them in a sandboxed environment (container or restricted process) with no direct access to the host file system.

- Extraction hardening: When extracting ZIP files, canonicalize paths and reject entries that resolve outside the intended directory. Reject absolute paths and `..` components.

- Whitelist safe package types: Only allow known, vetted package structures, or require packages to come from verified authors before performing installs.

- Process isolation: Launch helper processes with least privilege (drop unnecessary environment variables, run with limited rights).

- User consent & visibility: Prompt clearly when a project contains executable scripts or package installation requirements - do not silently run them.
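The extraction-hardening item above can be sketched in Python as follows. This is one possible approach under stated assumptions, not Meta's actual fix: the function name `safe_extract` and the exception type are hypothetical, and the policy is deliberately conservative (it refuses any entry containing `..`, a leading `/`, or a colon, even if it would have resolved safely).

```python
import io
import os
import shutil
import tempfile
import zipfile


class UnsafeArchiveError(Exception):
    """Raised when an entry would resolve outside the extraction directory."""


def safe_extract(archive: zipfile.ZipFile, dest_dir: str) -> list:
    """Validate every entry first, then extract; returns the files written."""
    dest_root = os.path.realpath(dest_dir)
    plan = []
    for info in archive.infolist():
        name = info.filename.replace("\\", "/")
        parts = [p for p in name.split("/") if p]
        # Conservative policy: no absolute paths, drive letters, or ".." parts.
        if name.startswith("/") or ":" in name or ".." in parts:
            raise UnsafeArchiveError(f"rejected entry: {info.filename!r}")
        if not parts:
            continue
        target = os.path.realpath(os.path.join(dest_root, *parts))
        # Canonical check: the resolved target must stay under the root.
        if os.path.commonpath([dest_root, target]) != dest_root:
            raise UnsafeArchiveError(f"escapes root: {info.filename!r}")
        plan.append((info, target))

    written = []
    for info, target in plan:
        if info.is_dir():
            os.makedirs(target, exist_ok=True)
            continue
        os.makedirs(os.path.dirname(target), exist_ok=True)
        with archive.open(info) as src, open(target, "wb") as dst:
            shutil.copyfileobj(src, dst)
        written.append(target)
    return written


if __name__ == "__main__":
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr("ok.txt", "fine")
        zf.writestr("../../evil.js", "// inert")
    with tempfile.TemporaryDirectory() as tmp:
        try:
            safe_extract(zipfile.ZipFile(io.BytesIO(buf.getvalue())), tmp)
        except UnsafeArchiveError as exc:
            print("blocked:", exc)
```

Validating the whole archive before writing anything means a single bad entry leaves the destination untouched, rather than half-extracted.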

For developers / contributors using third-party packages

- Inspect packages before opening in local tooling - especially if they come from untrusted sources.

- Open untrusted projects in isolated VMs or disposable containers.

- CI hygiene: Ensure CI that processes user-submitted projects runs in strict isolation and rejects lifecycle hooks.

- Supply chain scanning: Add package manifest checks to pipeline scanners to detect lifecycle scripts.

Remediation checklist (practical items)

| Area | Action |
|---|---|
| Extraction | Canonicalize paths; reject entries with .. or absolute paths |
| Package execution | Disable automatic lifecycle script execution unless verified |
| Sandboxing | Run installs in containers with no host mounts |
| Vetting | Only run verified/whitelisted library packages automatically |
| User prompts | Ask explicit consent when running installs or scripts |
| Monitoring | Log and alert on unexpected Node/Yarn invocations during project open |
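For the "Package execution" row, one way a host tool could avoid running lifecycle scripts is to pass `--ignore-scripts`, a flag that both npm and Yarn classic accept on `install`, and to start the helper with a reduced environment. The Python sketch below is an assumption about how such a wrapper might look; the allowed-variable list and function names are hypothetical, and trimming the environment alone does not stop a package manager from locating user-level config files.

```python
import os
import subprocess

# Environment variables the helper may inherit (an assumed minimal set).
ALLOWED_ENV = ("PATH", "SYSTEMROOT", "TEMP", "TMP")


def minimal_env(source=None) -> dict:
    """Copy only allowlisted variables from the given (or current) environment."""
    source = os.environ if source is None else source
    return {key: source[key] for key in ALLOWED_ENV if key in source}


def install_command(manager: str = "npm") -> list:
    """Build an install command that skips lifecycle scripts."""
    if manager not in ("npm", "yarn"):
        raise ValueError(f"unsupported package manager: {manager!r}")
    return [manager, "install", "--ignore-scripts"]


def run_install(package_dir: str, manager: str = "npm") -> subprocess.CompletedProcess:
    """Run the install in package_dir with scripts disabled and a trimmed environment."""
    return subprocess.run(
        install_command(manager),
        cwd=package_dir,
        env=minimal_env(),
        check=True,
        timeout=300,
    )


if __name__ == "__main__":
    # Only print the command; actually running it requires npm or Yarn on PATH.
    print(" ".join(install_command("npm")))
```

This covers script execution only; it should be combined with the sandboxing and extraction rows rather than used in place of them.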

Troubleshooting & common pitfalls

- False negatives in ZIP sanitizers: Some naive sanitizers fail to handle Unicode/encoded traversal (`%2E%2E`, `\u002e\u002e`). Normalize encoded characters before validation.

- Relative vs absolute path handling: Different OSes treat separators differently; normalize `\` and `/` and resolve to absolute canonical paths.

- Yarn/Node config quirks: `yarnPath` can be relative, so attackers may exploit base folder assumptions. Avoid trusting relative resolution behavior.

- CI side-effects: Automated tests that open projects can be an attack surface if CI uses shared runners, so isolate them.
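The first two pitfalls can be illustrated with a small Python sketch that normalizes an entry name before checking it for traversal. It is intentionally conservative and rests on an assumption: whether percent-encoded or `\u`-escaped names are ever decoded depends on the specific extraction pipeline, so this treats them as if they might be. The function names are hypothetical.

```python
import re
import unicodedata
from urllib.parse import unquote

_U_ESCAPE = re.compile(r"\\u([0-9a-fA-F]{4})")


def normalize_entry_name(raw: str, max_rounds: int = 3) -> str:
    """Decode common encodings and unify separators before validation."""
    name = raw
    # Percent-decode repeatedly to catch double-encoded input like %252E.
    for _ in range(max_rounds):
        decoded = unquote(name)
        if decoded == name:
            break
        name = decoded
    # Turn literal \uXXXX sequences into the characters they denote.
    name = _U_ESCAPE.sub(lambda m: chr(int(m.group(1), 16)), name)
    # Fold compatibility forms (e.g. fullwidth dots and slashes) to ASCII.
    name = unicodedata.normalize("NFKC", name)
    # Treat both separators the same way.
    return name.replace("\\", "/")


def is_traversal(raw: str) -> bool:
    """True if the normalized name is absolute, has a drive/colon, or contains '..'."""
    name = normalize_entry_name(raw)
    if name.startswith("/") or ":" in name:
        return True
    return ".." in name.split("/")


if __name__ == "__main__":
    for sample in ("%2E%2E/%2E%2E/x.js", "..\\..\\x.js", "scripts/main.js"):
        print(f"{sample!r}: traversal={is_traversal(sample)}")
```

A check like this complements, rather than replaces, the canonical-path comparison: normalization catches disguised names, while resolving against the extraction root catches anything normalization misses.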

Responsible disclosure & impact

- The researcher reported both issues to Meta/Facebook following responsible disclosure. Both reports were rewarded (the postinstall finding received $2,625).

- Even though Spark AR Studio is discontinued, these reports remain instructive: local tooling that processes user-provided packages must treat manifests and lifecycle hooks as executable surfaces and protect users accordingly.

Final thoughts

These Spark AR RCEs are textbook supply-chain / local execution problems: when a platform unpacks or installs third-party content on the user’s machine, lifecycle scripts and archive entries become part of the trusted computing base. The mitigations are straightforward but must be enforced: sanitize extraction paths, never run lifecycle scripts implicitly, and sandbox any required installations.

If you build developer tooling, package managers, or editors that accept third-party content, treat that content as hostile by default - assume the worst, and design a minimal-privilege, auditable handling flow.
