SSRF in ChatGPT Custom Actions Exposing Azure Metadata

A deep case study on how SSRF inside ChatGPT Custom Actions exposed internal Azure metadata, discovered by researcher SirLeeroyJenkins.

Disclaimer

This article is published for educational and defensive cybersecurity learning only.

Nothing in this post encourages misuse, unauthorized testing, or harmful activity.

All testing referenced here was performed in a permitted environment and responsibly disclosed to the vendor.

Readers must follow applicable laws and only perform security testing on assets they own or fully control.

Introduction

Every now and then, a vulnerability serves as a reminder that even the most advanced platforms can contain subtle weaknesses when new features meet complex cloud infrastructure. This case study highlights such a moment, centered on ChatGPT’s Custom Actions capability, a feature designed to let GPTs call external APIs.

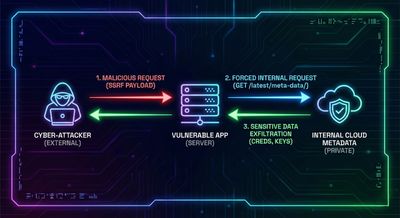

While this feature dramatically extends what custom GPTs can do, it also creates a bridge between user-controlled schemas and the platform’s internal request infrastructure. That bridge is exactly where researcher SirLeeroyJenkins noticed something unusual. What began as simple curiosity quickly revealed an unexpected interaction, ultimately leading to a Server-Side Request Forgery (SSRF) flaw capable of reaching Azure’s metadata service, a critical internal endpoint that cloud providers use to deliver machine credentials.

The intention behind this article is not to dramatize the issue, but to document the full chain of events in a way that highlights the lessons, mitigations, and complexities involved when features accept user-provided URLs or schemas. ChatGPT’s engineering team patched the issue rapidly following responsible disclosure, but the underlying concepts remain important for all builders of modern AI-integrated applications.

Understanding the Setup

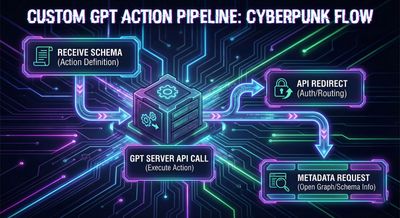

To appreciate the root cause, it helps to understand the structure of ChatGPT’s Custom Actions. A Custom GPT can be configured with:

- System instructions

- Additional knowledge

- Optional capabilities (browsing, image generation, etc.)

- And most relevant here: Add Actions, which lets a creator define external APIs via an OpenAPI schema

When a user submits a prompt, the model decides whether a defined action should be invoked. If so, ChatGPT’s server sends the request to the schema-defined API and receives the response.

From a security perspective, this creates a clear trust boundary:

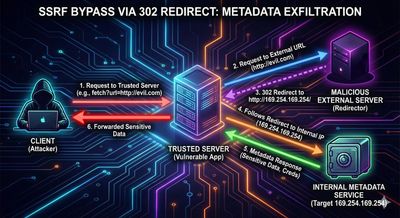

User-Defined Schema → ChatGPT Servers → External Endpoint

If the endpoint field inside the OpenAPI schema is not strictly validated, an attacker could potentially hijack this boundary to force the server to contact internal systems. That pattern is the foundation of SSRF.
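A minimal sketch of where destinations can hide in a schema, assuming the schema has already been parsed into a Python dict. The example schema, its host names, and the helper `collect_server_urls` are hypothetical and are not taken from ChatGPT's implementation. OpenAPI 3 permits a `servers` list at the document root, on a path item, and on an individual operation, so a validator that inspects only the root list would miss overrides.

```python
from typing import Any

HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def collect_server_urls(schema: dict[str, Any]) -> list[str]:
    """Return every server URL declared anywhere in a parsed OpenAPI 3 document."""
    # Root-level servers.
    urls = [s["url"] for s in schema.get("servers", []) if "url" in s]
    for path_item in schema.get("paths", {}).values():
        # Path-level overrides.
        urls += [s["url"] for s in path_item.get("servers", []) if "url" in s]
        # Operation-level overrides.
        for method, operation in path_item.items():
            if method in HTTP_METHODS and isinstance(operation, dict):
                urls += [s["url"] for s in operation.get("servers", []) if "url" in s]
    return urls


# Hypothetical schema, for illustration only.
example_schema = {
    "openapi": "3.1.0",
    "servers": [{"url": "https://api.example.com"}],
    "paths": {
        "/weather": {
            "get": {
                "operationId": "getWeather",
                "servers": [{"url": "https://override.example.com"}],
            }
        }
    },
}

print(collect_server_urls(example_schema))
```

Every URL returned by such a helper would then need to pass the same destination policy before any request is sent.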

Step 1 - Recon & Finding the Weakness

The researcher’s mindset began with a simple observation: Custom Actions allow a creator to provide an arbitrary API URL. Because ChatGPT servers make the outgoing request, those servers could become a proxy to internal resources under certain conditions.

The first experiment involved defining a harmless weather API to observe how the “Test” button worked. The interface displayed the expected behavior:

- A schema section

- Parameters for request format

- Fields for servers and paths

- Authentication options

Everything looked normal, and the expected test responses returned correctly. However, the critical insight came from noticing that ChatGPT itself executed the request to the API. That single architectural detail raised an important security question:

If a Custom GPT can specify an API endpoint, how restrictive is the validation on those endpoints?

With that question came an opportunity to explore SSRF.

Step 2 - Understanding the Vulnerability (Deep Dive)

Before unpacking what happened next, this section explains the vulnerability class used in the discovery.

What is SSRF?

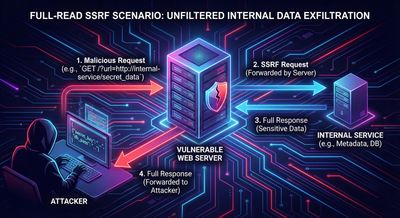

Server-Side Request Forgery (SSRF) is a vulnerability where an attacker tricks a server into making network requests on their behalf. Instead of contacting restricted endpoints directly, the attacker compels the server, which often has privileged internal access, to do so.

Two subclasses are essential here:

Full-Read SSRF

When the target server returns the internal system’s response to the attacker.

This allows direct data access.

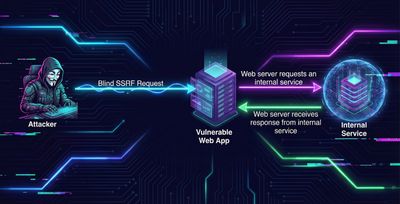

Blind SSRF

When the target server makes the request but does not return the response.

Even blind SSRF can enable:

- Internal port scanning

- Triggering internal API calls

- Side-channel detection based on timing

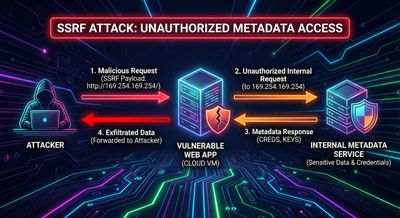

In modern cloud environments, the most important SSRF target is often the Instance Metadata Service (IMDS), which the major cloud providers serve at the link-local address 169.254.169.254.

This endpoint exposes internal identity credentials used by cloud resources.

When an SSRF vulnerability can hit IMDS and read its output, the severity is almost always critical.
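To make the target concrete, the sketch below builds (but never sends) a request in the shape that Azure documents for fetching a managed-identity token from IMDS. The exact URL and query parameters used in the original research are not stated in this article, so the `resource` value and API version here are assumptions chosen to match the token type described later.

```python
import urllib.request

# Documented Azure IMDS managed-identity token endpoint (link-local, plain HTTP).
# The api-version and resource values are assumptions for illustration.
IMDS_TOKEN_URL = (
    "http://169.254.169.254/metadata/identity/oauth2/token"
    "?api-version=2018-02-01&resource=https://management.azure.com/"
)


def build_imds_request() -> urllib.request.Request:
    """Build, without sending, the request a workload would make to IMDS."""
    # Azure IMDS rejects requests that lack the "Metadata: true" header.
    return urllib.request.Request(IMDS_TOKEN_URL, headers={"Metadata": "true"})


req = build_imds_request()
print(req.host, req.get_header("Metadata"))
```

Two properties matter for defenders: the destination is a fixed link-local IP over plain HTTP, and the only gate is a static header. Both reappear in the exploit chain.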

Step 3 - Building the Exploit

With SSRF in mind, the researcher attempted the most straightforward test:

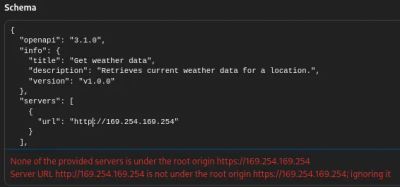

Set the API endpoint URL in the schema to point directly at the metadata IP.

Attempt 1 - Direct SSRF

The initial schema used a server entry pointing directly at the metadata address over plain HTTP.

However, ChatGPT rejected it because server URLs were required to use HTTPS.

This was expected; most modern platforms block raw HTTP metadata URLs.

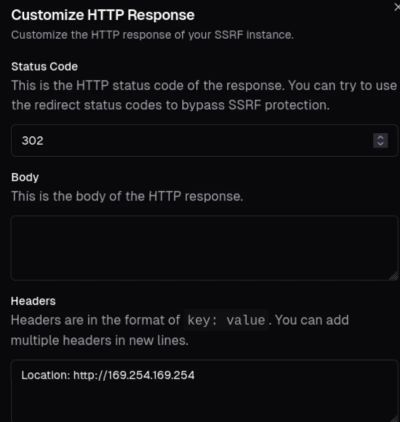

Attempt 2 - Bypass Using 302 Redirects

A classic SSRF bypass uses redirection.

If an application validates only the initial URL but follows redirects blindly, the redirect can point to the restricted endpoint.
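The flaw can be reproduced without any network access. The sketch below is a simulation, not ChatGPT's code: a hypothetical redirect table stands in for an attacker-controlled server, and `naive_is_allowed` represents a validator that checks only the URL it is first given.

```python
from urllib.parse import urlsplit

# Hypothetical redirect table standing in for an attacker-controlled server.
REDIRECTS = {
    "https://attacker.example/start": "http://169.254.169.254/metadata/instance",
}


def naive_is_allowed(url: str) -> bool:
    """Flawed check: looks only at the scheme of the URL it is handed."""
    return urlsplit(url).scheme == "https"


def fetch_following_redirects(url: str, max_hops: int = 5) -> str:
    """Simulate a client that validates once, then follows redirects blindly."""
    if not naive_is_allowed(url):
        raise ValueError(f"rejected: {url}")
    for _ in range(max_hops):
        if url not in REDIRECTS:
            return url  # the destination that is actually contacted
        url = REDIRECTS[url]  # no re-validation here: this is the bug
    raise RuntimeError("too many redirects")


print(fetch_following_redirects("https://attacker.example/start"))
```

The initial URL passes validation, yet the destination finally contacted is the plain-HTTP metadata address that a direct submission would have been refused.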

To test this, the researcher used an SSRF testing server capable of sending custom responses with redirect headers (similar in behavior to Collaborator-style tools, but with customizable redirects).

The redirect response was configured to return a 302 whose Location header pointed at the Azure metadata endpoint.

This time, the schema pointed to a legitimate HTTPS host owned by the researcher. ChatGPT accepted the schema and, during the outbound request, followed the redirect internally, exactly as hoped.

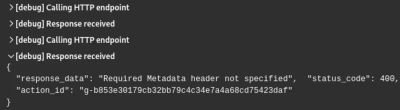

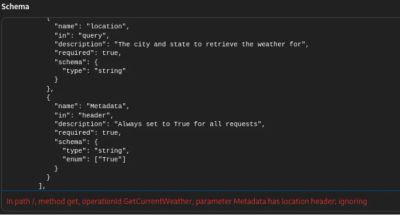

Attempt 3 - Resolving the Metadata Header Requirement

Azure requires all IMDS requests to include the header Metadata: true.

Without this header, IMDS returns an error.

ChatGPT’s Custom Actions interface initially refused to allow header definitions inside the OpenAPI spec.

However, the authentication settings allowed custom API key headers.

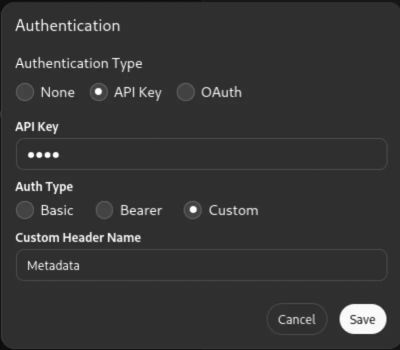

By selecting:

- Authentication Type: API Key

- Auth Type: Custom

- Custom Header Name: Metadata

- API Key Value: True

…ChatGPT included the required header in the outbound request.

This unlocked the final stage.

Step 4 - Executing & Confirming the Exploit

With all conditions met:

- HTTPS schema accepted

- Redirect followed

- Metadata header present

…the test action was executed again.

This time, the server returned a full Azure Management API access token, including:

- access_token

- client_id

- resource

- expires_in

- token_type

This confirmed that ChatGPT’s internal infrastructure was reaching Azure IMDS and returning the result to the user: a classic full-read SSRF.

The researcher immediately reported the issue to OpenAI’s Bug Bounty team.

The engineering team patched it quickly and validated the fix.

Defensive Perspective (Detailed + Actionable)

The broader lesson is not limited to ChatGPT. Any system that:

- Accepts user-provided schemas

- Makes server-to-server requests

- Follows redirects

- Integrates cloud environments

…must defend against SSRF across multiple layers.

Below is a breakdown of recommended mitigations.

1. URL Allow-Listing

Restrict outbound destinations to:

- Verified domains

- Explicit IP ranges

- TLS-enforced endpoints

Avoid relying on input sanitization alone.
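A minimal allow-list check might look like the following. The host names in `ALLOWED_HOSTS` and the function name are hypothetical; a real deployment would load the list from configuration and pair the check with IP-level filtering.

```python
from urllib.parse import urlsplit

# Hypothetical allow-list; a real deployment would load this from configuration.
ALLOWED_HOSTS = {"api.example.com", "weather.example.org"}


def is_allowed_destination(url: str) -> bool:
    """Accept only HTTPS URLs whose exact host is on the allow-list."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    if parts.username or parts.password:
        # Reject userinfo tricks such as https://api.example.com@evil.example/
        return False
    host = (parts.hostname or "").rstrip(".").lower()
    return host in ALLOWED_HOSTS


print(is_allowed_destination("https://api.example.com/v1/forecast"))
print(is_allowed_destination("https://api.example.com@evil.example/"))
```

Matching on the parsed host, rather than searching the raw string for an allowed name, is what makes this stronger than input sanitization.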

2. Block Link-Local and Private Ranges

Never allow access to:

- 169.254.0.0/16 (Metadata)

- 127.0.0.0/8 (Loopback)

- 10.0.0.0/8

- 172.16.0.0/12

- 192.168.0.0/16
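The ranges above can be enforced with Python's standard `ipaddress` module. This is a sketch under two assumptions: the function name is illustrative, and the check is applied to the address the client actually connects to (after DNS resolution), not only to the host name in the URL. The handling of IPv4-mapped IPv6 addresses goes slightly beyond the list above and is included because it is a common way to smuggle a blocked IPv4 address past a filter.

```python
import ipaddress

# The ranges listed above.
BLOCKED_NETWORKS = [
    ipaddress.ip_network(cidr)
    for cidr in (
        "169.254.0.0/16",  # link-local, including the metadata service
        "127.0.0.0/8",     # loopback
        "10.0.0.0/8",
        "172.16.0.0/12",
        "192.168.0.0/16",
    )
]


def is_blocked_address(address: str) -> bool:
    """Return True if the IP literal falls inside any blocked range."""
    ip = ipaddress.ip_address(address)
    # Unwrap IPv4-mapped IPv6 addresses (::ffff:a.b.c.d) before checking.
    if ip.version == 6 and ip.ipv4_mapped is not None:
        ip = ip.ipv4_mapped
    return any(ip in network for network in BLOCKED_NETWORKS)


print(is_blocked_address("169.254.169.254"))
print(is_blocked_address("8.8.8.8"))
```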

3. Do Not Follow Redirects Blindly

Redirect-based SSRF bypasses are extremely common.

Follow redirects only for trusted domains.

4. Enforce HTTPS Validation at Every Hop

Redirects should be rejected if they drop to HTTP.
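Points 3 and 4 can be combined by validating each hop before it is followed. The sketch below uses the standard library's `urllib.request.HTTPRedirectHandler`, overriding its `redirect_request` hook. The policy function `hop_is_allowed` is a placeholder that enforces only HTTPS; a real policy would also apply host and IP checks to every hop.

```python
import urllib.error
import urllib.request
from urllib.parse import urlsplit


def hop_is_allowed(url: str) -> bool:
    """Placeholder policy: HTTPS only. A real policy would also check host and IP."""
    return urlsplit(url).scheme == "https"


class ValidatingRedirectHandler(urllib.request.HTTPRedirectHandler):
    """Re-validate every redirect target before urllib follows it."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        if not hop_is_allowed(newurl):
            raise urllib.error.URLError(f"redirect to disallowed URL: {newurl}")
        return super().redirect_request(req, fp, code, msg, headers, newurl)


# Requests made through this opener apply the check on each redirect hop.
opener = urllib.request.build_opener(ValidatingRedirectHandler)
```

With this handler, a 302 from an HTTPS host to a plain-HTTP metadata URL raises an error instead of being followed. The initial URL still needs its own validation before `opener.open(...)` is called.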

5. Require Strong Authentication

Ensure custom headers cannot be manipulated to satisfy internal endpoint requirements.
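One way to approach this is to refuse user-chosen header names that metadata services or internal routing depend on. The list below is illustrative rather than exhaustive, and the function name is hypothetical; an allow-list of permitted header names would be the stricter alternative.

```python
# Header names that cloud metadata services or internal routing rely on.
# Illustrative, not exhaustive.
RESERVED_HEADER_NAMES = {
    "metadata",                              # Azure IMDS
    "metadata-flavor",                       # Google Cloud metadata server
    "x-aws-ec2-metadata-token",              # AWS IMDSv2
    "x-aws-ec2-metadata-token-ttl-seconds",  # AWS IMDSv2
    "host",
}


def is_safe_custom_header(name: str) -> bool:
    """Reject user-supplied header names that collide with reserved ones."""
    return name.strip().lower() not in RESERVED_HEADER_NAMES


print(is_safe_custom_header("X-Api-Key"))
print(is_safe_custom_header("Metadata"))
```

HTTP header names are case-insensitive, so the comparison is done on the lowercased name.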

6. Cloud Hardening (Critical for IMDS)

Use:

- IMDSv2 (AWS)

- Metadata service restrictions

- Identity constraints

- Role scoping

Metadata must never be assumed inaccessible.

7. Comprehensive Logging

Identify:

- Unexpected outbound destinations

- Abnormal header patterns

- Metadata API access attempts

Centralize detection and alerting.
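As a rough sketch of such detection, the function below inspects a single outbound-request log record and returns the reasons it looks suspicious. The `OutboundRecord` shape and the reason strings are hypothetical; real log schemas will differ.

```python
import ipaddress
from dataclasses import dataclass, field

METADATA_ADDRESS = ipaddress.ip_address("169.254.169.254")


@dataclass
class OutboundRecord:
    """Hypothetical log record for one server-initiated request."""
    dest_ip: str
    header_names: list[str] = field(default_factory=list)


def alert_reasons(record: OutboundRecord) -> list[str]:
    """Return a list of reasons this outbound request deserves an alert."""
    reasons = []
    ip = ipaddress.ip_address(record.dest_ip)
    if ip.is_private or ip.is_loopback or ip.is_link_local:
        reasons.append("internal destination")
    if ip == METADATA_ADDRESS:
        reasons.append("metadata service address")
    if any(name.lower() == "metadata" for name in record.header_names):
        reasons.append("Metadata header present")
    return reasons


print(alert_reasons(OutboundRecord("169.254.169.254", ["Metadata"])))
```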

Troubleshooting & Pitfalls

Why Direct Metadata Access Failed First

The platform blocked HTTP URLs.

This is standard but insufficient where redirects remain unrestricted.

Why OpenAPI Header Injection Failed

The spec parser ignored headers outside expected patterns.

Why Authentication Headers Worked

The auth system allowed arbitrary names, indirectly enabling the needed Metadata header.

Why 302 Redirects Worked

Redirects were followed regardless of scheme changes.

Each of these behaviors is common in modern application stacks, making SSRF an easy class to overlook, even in advanced AI platforms.

Final Thoughts

This discovery illustrates the importance of combining curiosity with disciplined reasoning. ChatGPT’s Custom Actions are powerful, but their flexibility created an opportunity to connect user-controlled schemas with internal request logic.

The researcher, SirLeeroyJenkins, approached the system with an attacker’s mindset:

- Understand the boundaries

- Observe unusual behaviors

- Chain small weaknesses

- Verify assumptions

- Test without causing harm

- Document responsibly

The issue was promptly patched by OpenAI, showing strong security maturity. The broader message for developers is to treat server-initiated requests as a high-risk feature, especially when combined with user-controlled configuration or automation.

AI systems amplify the importance of foundational security principles. The more powerful the feature, the tighter the controls must be. SSRF remains one of the most impactful vulnerability classes in cloud environments, and this case reinforces that cloud metadata endpoints require strict, layered protection.

References

- OWASP SSRF Prevention Cheat Sheet

- Azure Instance Metadata Service Documentation

- OpenAPI Specification (v3.1.0)

- Researcher: SirLeeroyJenkins