How I Stole an AI’s Brain (Legally) - Model Extraction & Membership Inference Attacks Explained

A hands-on breakdown of a model extraction and membership inference attack against an inference API: how the chain works, why it matters, and how engineers can defend their ML systems.

Disclaimer (Educational Purpose Only)

This write-up explains research-level attacks (model extraction & membership inference) for defensive and educational use. Do not run these techniques against systems you do not own or have explicit permission to test. The goal here is to help engineers, product teams, and security practitioners understand the threat model and harden ML-powered APIs.

TL;DR - What happened in one paragraph

A researcher found an internal inference API (a 403 response). By fuzzing headers and crafting a trusted-cloud IP via X-Forwarded-For, the API returned full prediction responses. The researcher then used targeted queries (including high-confidence matches on rare images) to show the model leaked memorized training examples (membership inference). They automated thousands of queries to build a labeled shadow dataset and trained a local clone that matched the original API’s behavior with >95% agreement - effectively stealing the model and exposing training data. The issue was reported and fixed; the story is a cautionary tale for teams deploying ML models as services.

Why this matters (short answer)

Unlike classical data breaches (dumping a database), model extraction and membership inference let attackers recreate intellectual property and confirm whether a specific sample was part of the model’s training data. For companies selling ML models or relying on proprietary training sets, this breaks confidentiality, privacy, and revenue models.

1. Attack overview - the high-level chain

- Discovery - Find the inference endpoint (often

.../predictor.../classify). - Bypass access controls - Use header fuzzing (e.g.,

X-Forwarded-For,X-Original-URL) or origin tricks to flip a 403 → 200. - Probe outputs - Request predictions, preferably with options that reveal more information (full probability vector, confidences).

- Membership inference probing - Test whether specific inputs are memorized by the model (very high confidence on rare samples).

- Build shadow dataset - Query the API over large public datasets to collect (input → label/confidence) pairs.

- Train clone model - Use the collected pairs to train a local model that approximates the original.

- Verify & document - Compare clone vs API, measure agreement/accuracy; prepare responsibly disclosed report.

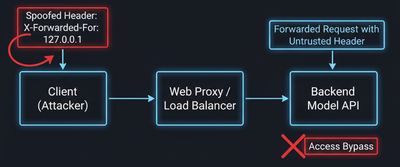

2. The discovery & bypass: header fuzzing and trusting cloud ranges

APIs often trust certain request headers or IP ranges (for internal/autoscaling agents). If a service naively accepts X-Forwarded-For without validation, an attacker can claim any client IP.

Example technique (defensive pseudocode - do not run against others):

If the server incorrectly trusts that header, the 403 may become 200 and return model outputs.

Defensive note: Never trust forwarded headers without verifying the immediate TCP peer or using a secure proxy that strips untrusted headers.

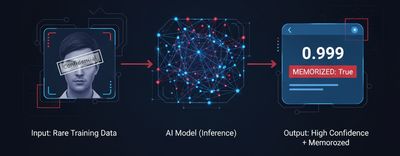

3. Membership inference - how to tell if a model 'memorized' a sample

A robust model generalizes - it gives sensible outputs across similar inputs but rarely outputs extremely high confidence for obscure, unique samples. If an API returns near-certain predictions for a very rare image (one unlikely to be in public datasets), that suggests memorization.

Example probing strategy (conceptual):

- Identify suspicious samples (rare images, internal docs, private photos).

- Send them to the model with

options: { return_full_probabilities: true }. - If probability for a single class is abnormally high (e.g., 0.999) while similar public images produce much lower confidences, suspect membership.

This is not proof on its own - but combined with other signals it becomes convincing evidence.

4. Model extraction (aka 'stealing' the model) - building the shadow dataset

Model extraction works because the inference API is effectively a labeling oracle. By querying the oracle over many inputs, an attacker obtains labelled pairs and can train a substitute model.

Key points:

- The richer the returned output (class probabilities, logits), the fewer queries needed.

- Targeted sampling: use diverse images (ImageNet, domain-specific corpora) to cover the model’s decision boundary.

- Sampling strategy matters: random sampling + active learning (query where the current clone disagrees most) speeds convergence.

Proof-of-concept loop (defensive illustration):

Training a local clone using TensorFlow/PyTorch on the dataset can produce a model with high agreement to the API - sometimes >95% for classification tasks.

5. Why API responses matter: probabilities, logits, and debug flags

APIs often include helpful features for developers - return_full_probabilities, top_k, or explain. These make model development easier but also dramatically reduce attacker query cost.

Risk levels (high → low):

- Full logits / full probability vectors (high risk)

- Top-K labels with confidences

- Single label only (lowest risk, but still usable)

Recommendation: minimize what you return in production APIs. If you must return probabilities, consider rounding or adding calibrated noise (differential privacy - discussed below).

6. Privacy risk: membership inference & training data leakage

Membership inference is a privacy attack: given a sample, decide if it was in the training set. This matters especially when training data contains PII, proprietary diagrams, or private images.

Consequences:

- Confirming whether a specific image or document was seen during training (privacy violation).

- Exfiltrating private content via model outputs (if memorized verbatim).

- Reputational and regulatory risk: GDPR/CCPA implications for training on personal data.

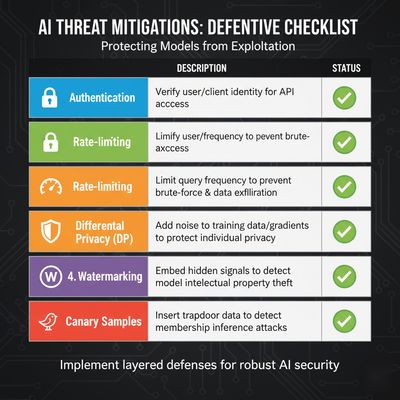

7. Defensive mitigations - engineering checklist

Below is a practical, prioritized list engineers can implement.

| Control | Why it helps | Implementation notes |

|---|---|---|

| Strict network-level header validation | Prevent header spoofing (e.g., X-Forwarded-For) | Strip or overwrite untrusted headers at ingress proxy |

| Authenticate & authorize clients | Prevent anonymous querying | Use mTLS / signed API keys and rate-limit per client |

| Minimize outputs | Reduce attack surface | Return only top-1 label; avoid probabilities/logits in prod |

| Rate limiting & anomaly detection | Detect bulk extraction attempts | Per-key quotas, burst protection, CAPTCHAs for suspicious patterns |

| Differential privacy | Prevent membership inference | Train with DP-SGD or add noise to outputs |

| Model access tiers | Separate dev vs prod capabilities | Dev APIs allow debug; prod is minimal and strictly monitored |

| Audit & logging | Forensic evidence & detection | Log client IDs, query patterns, and top-k results only |

| Watermarking / fingerprinting | Detect stolen models | Embed robust watermarking to later identify clones |

| Canary datasets & honeypots | Detect extraction via known samples | Include private sentinel examples and monitor for queries |

| Model distillation limits | Reduce leakage | Distill to smaller models with lower capacity to memorize |

8. Practical defenses explained

Authenticate & tie queries to an identity

Require authenticated clients with strong credentials or mutual TLS. Authentication allows per-client rate limits and better detection. It removes anonymous or unauthenticated oracles.

Reduce returned information

Avoid returning full probability vectors. If developers need it, provide it only in a separate dev environment behind authentication.

Rate-limit and monitor

Implement low query-per-minute budgets for each API key. Trigger alerts when a key requests thousands of predictions within hours, or when requests follow systematic sampling patterns.

Differential privacy & DP training

DP techniques add controlled noise during training so outputs don't reveal membership. Implementing DP during model training (e.g., DP-SGD) raises the bar for membership inference attacks.

Watermark & provenance

Watermark models using robust, black-box detectable watermarks. If a model clone appears online, you can prove theft.

Canary samples & monitoring

Plant a small set of secret samples (not public) and monitor if they are queried - a direct signal of extraction activity.

9. Responsible disclosure / triage checklist (if you find this)

If you discover such an issue responsibly:

- Stop active probing once you have minimal evidence.

- Triage locally - capture reproducible artifacts (request/response headers, timestamps, sample inputs & outputs).

- Redact any real sensitive content in PoCs.

- Report privately to vendor security contact / bug bounty program.

- Offer to help validate patch fixes in a lab.

- Avoid publishing full exploit details until vendor has mitigated.

10. Case study takeaways (from the presented write-up)

- 403 != safety. A 403 can be bypassed if header/IP trust is misconfigured.

- APIs are oracles. Every prediction returned to a client is information that can be aggregated.

- Memorization matters. High-confidence outputs on rare samples are red flags for memorized training data.

- Defenses are multilayered. Combine authentication, minimal outputs, rate limiting, DP, and monitoring for real protection.

Appendix - quick code snippets (defensive reference)

Strip untrusted headers at the proxy (Nginx example):

Simple per-key rate limiting (conceptual):

Training with Differential Privacy - high level

Final thoughts - the security model for ML services

Machine learning models are not just code: they are data-encoded intellectual property and privacy artifacts. Treat inference APIs like any sensitive service:

- Authenticate callers.

- Minimize information leakage.

- Monitor and rate-limit.

- Apply privacy-preserving training when handling sensitive data.

If you’re shipping an inference API, assume an attacker will try to query it at scale. Design the system accordingly.

Notes:

- Replace

<TARGET_API>placeholders when testing in an authorized lab. - Where code samples are included, use them only in controlled environments.